One of my tasks after the scout summer camp was to go through all the photos from it and choose the best ones to share on Facebook. It turned out that about 80% of the images were crappy and the rest needed some improvement. There are lots of ways to tweak a photo, and it takes a lot of time to find the best crop, colors, etc. It is frustrating. Wouldn’t it be amazing to have this process automated?

Project’s GitHub: https://github.com/thePetrMarek/AutomaticNeuralImageCropper

My goal in this project is to design a system that takes a photo and automatically modifies it to look its best. This includes cropping and color modifications. I decided not to rely on smart heuristics alone. Instead, I created a trainable end-to-end neural model.

My inspiration is Andrej Karpathy’s blog post What a Deep Neural Network thinks about your #selfie. In it, Karpathy trained a convolutional neural network to recognize good and bad selfies. The best thing from my point of view is that he was able to improve the selfies with this system by cropping them. It works like this. The model outputs the probability that the selfie is good. You make many random crops of the selfie and choose the one with the highest probability of being good. Voilà, you have a better selfie. I want to use this principle in my system.

Karpathy’s selfie cropper (http://karpathy.github.io/2015/10/25/selfie/)

Recognizing good and bad pictures

To train any model you need data first (you know, captain obvious). The data should contain images labeled by some quality measurement like the number of likes, shares, upvotes or a rating. You can use AVA: A Large-Scale Database for Aesthetic Visual Analysis, for example. I downloaded about 1M various images labeled by the number of likes. Because the number of likes is unbounded, the raw count doesn’t tell us directly whether an image is good or bad. And that is what we want to predict, right? It holds that more likes mean a better image (at least in most cases). But where is the line between good and bad? We can turn to Andrej Karpathy again. His solution to this problem was to shuffle the images, split them into random batches, sort each batch by the number of likes, and label the top half of each batch as good and the bottom half as bad. We label every image as good or bad in this way.
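The batch-wise labeling described above can be sketched in a few lines. This is only an illustration of the idea, not the project’s code: the function name `label_by_batches`, the `(image_id, likes)` input format and the batch size of 100 are assumptions made for the example.

```python
import random


def label_by_batches(items, batch_size=100, seed=0):
    """Label images as good (1) or bad (0) relative to their random batch.

    items: list of (image_id, likes) pairs (hypothetical input format).
    batch_size: assumed value, the post does not state the one actually used.
    """
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)

    labels = {}
    for start in range(0, len(shuffled), batch_size):
        # Sort one random batch from most liked to least liked.
        batch = sorted(shuffled[start:start + batch_size],
                       key=lambda pair: pair[1], reverse=True)
        half = len(batch) // 2
        for rank, (image_id, _likes) in enumerate(batch):
            # Top half of the batch is "good", the rest is "bad".
            labels[image_id] = 1 if rank < half else 0
    return labels
```

Because each image is only compared with the other images in its batch, no global threshold on the number of likes is needed.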

Examples of AVA dataset (http://personal.ie.cuhk.edu.hk/~dy015/ImageAesthetics/Image_Aesthetic_Assessment_files/ava.jpg)

The next step is to design the model. My model is written in TensorFlow. I took the simple route and used an Inception v4 model pre-trained on ImageNet, to which I appended a fully connected output layer with two classes. My loss function is cross entropy, and I update only the parameters of the fully connected layer. The weights of the Inception model are fixed.
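To make “train only the output layer” concrete, here is a small pure-Python sketch of fitting a two-class softmax layer with cross entropy on top of fixed feature vectors. It is not the project’s TensorFlow code: the feature vectors are toy stand-ins for the outputs of the frozen Inception model, and the names `train_head` and `good_probability`, the learning rate and the number of epochs are all assumptions for illustration.

```python
import math
import random


def softmax(logits):
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logits_for(weights, biases, x):
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def train_head(features, labels, num_classes=2, lr=0.1, epochs=300, seed=0):
    """Fit a fully connected softmax layer with plain SGD.

    features: fixed vectors (stand-ins for frozen backbone outputs).
    Only `weights` and `biases` of this layer are ever updated.
    """
    rng = random.Random(seed)
    dim = len(features[0])
    weights = [[rng.uniform(-0.01, 0.01) for _ in range(dim)]
               for _ in range(num_classes)]
    biases = [0.0] * num_classes
    for _ in range(epochs):
        for x, y in zip(features, labels):
            probs = softmax(logits_for(weights, biases, x))
            for c in range(num_classes):
                # Gradient of cross entropy with respect to logit c.
                grad = probs[c] - (1.0 if c == y else 0.0)
                for j in range(dim):
                    weights[c][j] -= lr * grad * x[j]
                biases[c] -= lr * grad
    return weights, biases


def good_probability(weights, biases, x, good_class=1):
    return softmax(logits_for(weights, biases, x))[good_class]
```

In the real system the feature vectors would come from the pre-trained network, and `good_probability` plays the role of the score used later for cropping and color search.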

The reason I don’t fine-tune Inception is that I train the model on my laptop with an NVIDIA GeForce GTX 960M. I ended up training it on only part of the data, approximately 50k images, over several nights (five to seven maybe…). The model achieves an accuracy of around 58%-60% on a validation set of 1000 images. That looks like poor performance, but it turns out to be enough in practice (Karpathy had similar performance in his work).

Automatically cropping the image

The next step was to crop the image automatically. The first thing I tried was to create five random crops, make slight modifications to each (change size, change position), follow the direction in which the predicted probability of a good photo increases, and choose the crop with the best score. The advantage of this method is that it is fast, but the disadvantage is the stochasticity of the process. The result depends on the five initial crops.
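One way to read this first approach is as a hill climb from a handful of random starting crops. The sketch below follows that reading and is an assumption, not the project’s implementation: `score_box` is a hypothetical callable standing in for the model’s probability that the crop is good, and the step size and minimum crop scale are illustrative values. Boxes use the `(left, upper, right, lower)` convention.

```python
import random


def random_square_box(width, height, rng, min_scale=0.5):
    shorter = min(width, height)
    side = rng.randint(int(shorter * min_scale), shorter)
    left = rng.randint(0, width - side)
    top = rng.randint(0, height - side)
    return (left, top, left + side, top + side)


def neighbors(box, width, height, step):
    """Yield slightly shifted or resized square boxes that fit the image."""
    left, top, right, _bottom = box
    side = right - left
    candidates = [
        (left - step, top, side), (left + step, top, side),
        (left, top - step, side), (left, top + step, side),
        (left, top, side - step), (left, top, side + step),
    ]
    for l, t, s in candidates:
        if s > 0 and l >= 0 and t >= 0 and l + s <= width and t + s <= height:
            yield (l, t, l + s, t + s)


def hill_climb_crop(width, height, score_box, starts=5, step=10, seed=0):
    rng = random.Random(seed)
    best_box, best_score = None, float("-inf")
    for _ in range(starts):
        box = random_square_box(width, height, rng)
        current = score_box(box)
        improved = True
        while improved:
            improved = False
            for candidate in neighbors(box, width, height, step):
                candidate_score = score_box(candidate)
                if candidate_score > current:
                    # Accept the first improving move and search again.
                    box, current, improved = candidate, candidate_score, True
                    break
        if current > best_score:
            best_box, best_score = box, current
    return best_box, best_score
```

Each start only ever moves uphill, so a different seed can end in a different local optimum, which is the stochasticity mentioned above.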

My next approach combats the stochasticity. I create a grid of crops, evaluate each of them and select the best one. I decided to use only square crops to reduce the number of possible crops. Another benefit is that the Inception model takes square images as input, so there is no distortion. My initial mistake was that I allowed crops that were too small. It often happened that the model chose a crop of some detail in the picture (leaves in the background of a photo, for example). I limited the size of the crop to at least 80% of the image’s shorter side (the crop sizes are 80%, 90% and 100% of the shorter side). This significantly increased the quality of the crops. The stride of the crop is 1/80 of the photo’s shorter side. This method is slower than the previous one because it needs to evaluate about 1500 crops on average. However, the results are better.
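The grid search can be sketched as follows, using the crop sizes and stride given above. The function names and the `score_box` callable (a stand-in for the model’s probability that the crop is good) are assumptions for the example, and rounding the stride down to whole pixels is my choice, not something stated in the post. Boxes use the `(left, upper, right, lower)` convention, which is also what Pillow’s `Image.crop` expects.

```python
def square_crop_boxes(width, height, scales=(0.8, 0.9, 1.0),
                      stride_fraction=1 / 80):
    """List every square crop box on a regular grid."""
    shorter = min(width, height)
    stride = max(1, int(shorter * stride_fraction))  # whole pixels, at least 1
    boxes = []
    for scale in scales:
        side = round(shorter * scale)
        for top in range(0, height - side + 1, stride):
            for left in range(0, width - side + 1, stride):
                boxes.append((left, top, left + side, top + side))
    return boxes


def best_crop(width, height, score_box):
    """Return the grid box with the highest score."""
    return max(square_crop_boxes(width, height), key=score_box)
```

For an 800x600 photo this produces about 1200 boxes, which is in line with the roughly 1500 evaluations per image mentioned above. Unlike the random starts of the first approach, the result is deterministic.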

The original image is at the top. Below, you can see two automatic crops taken with two settings of the system. If the system is allowed to make small crops, the results usually look like the crop on the left. It tends to crop some small, unimportant detail. If small crops are forbidden, the system starts to create more reasonable crops like the one on the right.

Improving colors

I decided to use the system to improve the colors of images too. I do it after cropping. I try several values of brightness, contrast, and color using ImageEnhance from the Pillow library. The procedure is the same as for cropping: try all allowed values independently for each property and choose the one with the highest probability of being good as predicted by the model. The key, again, is to limit the possible values. The first version, which allowed all values, sometimes produced images that were too dark or too bright. I use only values between 0.75 and 1.25 with a step size of 0.01 (1 is the original image) for all three properties. This procedure is fairly quick because there are only about 150 possible values to try (about 50 for each property).
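A minimal sketch of this search is below. The helper names and the `score` callable (a stand-in for the model’s probability that the image is good) are assumptions; only the three `ImageEnhance` classes and their `enhance(factor)` method come from Pillow. The step is a parameter, and a step of 0.01 gives about 50 candidates per property. Each property is searched on the same input image, which is my reading of “independently”.

```python
def factor_grid(low=0.75, high=1.25, step=0.01):
    """Evenly spaced enhancement factors, both ends included."""
    count = int(round((high - low) / step))
    return [round(low + i * step, 4) for i in range(count + 1)]


def pillow_enhance(image, prop, factor):
    # Imported lazily so the search logic itself runs without Pillow.
    from PIL import ImageEnhance
    enhancers = {
        "brightness": ImageEnhance.Brightness,
        "contrast": ImageEnhance.Contrast,
        "color": ImageEnhance.Color,
    }
    return enhancers[prop](image).enhance(factor)


def best_factors(image, score, enhance=pillow_enhance,
                 props=("brightness", "contrast", "color"), factors=None):
    """For each property, pick the factor whose result scores highest."""
    factors = factors or factor_grid()
    return {
        prop: max(factors, key=lambda f: score(enhance(image, prop, f)))
        for prop in props
    }
```

The chosen factors can then be applied one after another with `pillow_enhance` to produce the final image. A factor of 1.0 leaves the image unchanged for all three enhancers.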

We can now move to the most interesting part, to the results of the system.

Good examples

Photos from the internet

I show examples of crops on the photos from the internet. They seem to be taken by professional photographers, and they have probably been modified already.

It seems that a single person is cropped correctly most of the time (www.pexels.com)

The system correctly located cat’s head. (www.pexels.com)

Crop of helicopter (www.pexels.com)

There is a trace of some filter in this photo, but someone may say that the colors of the original are better. (www.pexels.com)

London’s crop (www.pexels.com)

Non-modified photos

Below are crops of pictures taken by my friends. They aren’t professional photographers, and the pictures were modified only minimally.

This crop of the Golden Gate Bridge turned out pretty good. Notice that the tower and the bridge deck lie on the thirds of the picture.

The model was able to locate a person in the forest in this case. The green is also “more green.”

The model was able to locate the most interesting part of the image and place it in the third.

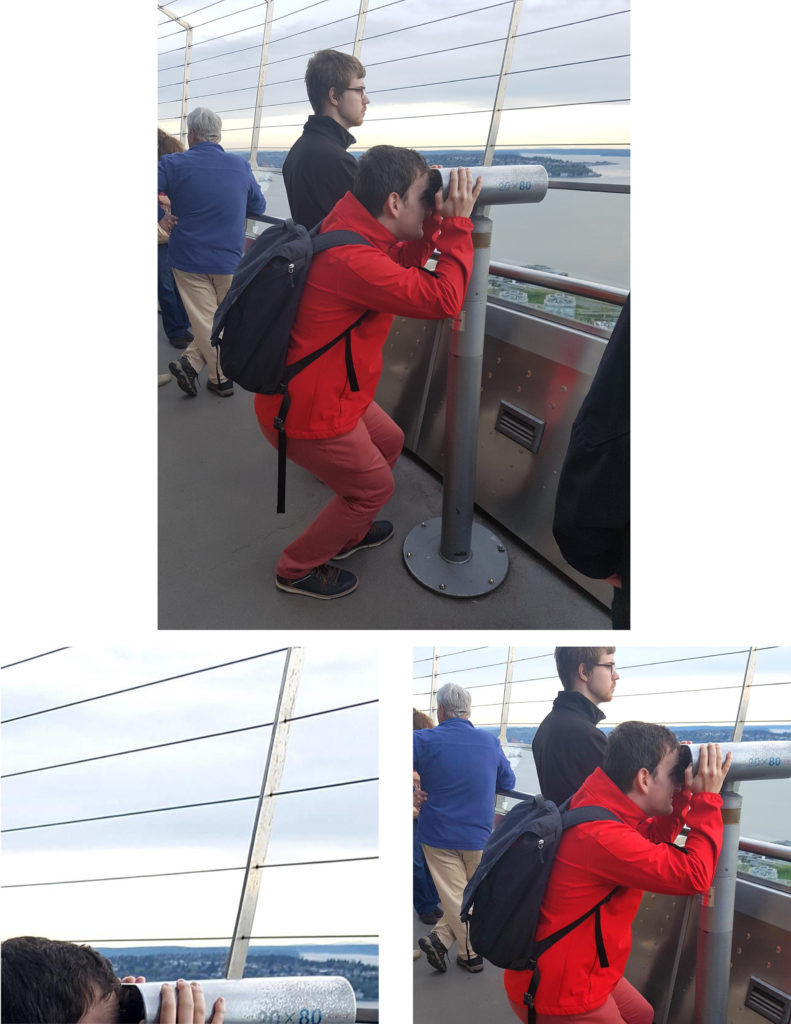

Crop of Seattle’s downtown

Perfect profile picture

Problematic examples

The system is not perfect all the time. The main problems are shown below.

One of the biggest problems is that the system is sometimes unable to locate the main object in the photo (the motorcyclist in this case). The combination of blue sky and orange sand may be interesting, but it is not the reason why this photo was taken. This is probably the most common problem. (www.pexels.com)

Group pictures sometimes get split between people.

Sometimes a group is completely cut away.

And sometimes the system decides to use some crazy colors.

Conclusion

This work shows that it is possible to create an end-to-end system for improving images by cropping them and adjusting their colors. Possible improvements include fine-tuning the Inception model or speeding the system up by using a simpler pre-trained model such as MobileNet. An interesting possibility would be to use the model in a camera app to display the score of a photo (or the expected number of likes) in real time. The system could also be used to create image or video thumbnails.

Hi, I read your GitHub and this blog. The work you have done is amazing. I am a student and I want to learn from your work. But your Inception checkpoint is not on GitHub. Can you help me?

Hello,

you can download the Inception model from https://github.com/tensorflow/models/tree/master/research/slim

I am a B-Tech student and I have a project similar to this. I need to study this code. Can you explain it?