I took the optional online course “Deep Learning” on Udacity this semester. I learned how deep, convolutional and recurrent neural networks work, how to use them, and how to implement them in TensorFlow. The final project of the course is to build a live camera app that recognizes sequences of digits. This post records the steps I took to build it.

The second part

The code is available on GitHub.

Update 2.7.2017

Single digit recognition

I start with the recognition of single digits from the MNIST dataset. The training, validation and testing splits contain 55000, 5000 and 10000 examples. I take these splits directly from TensorFlow.

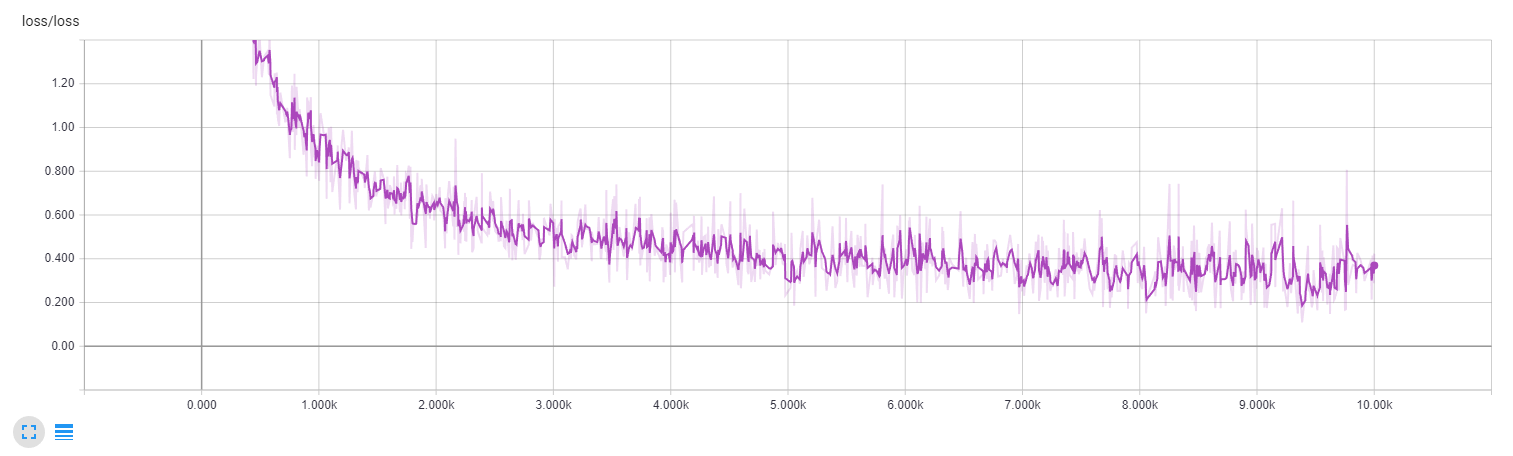

Single layer neural network

My first experiment is a single layer neural network as a warm-up. The input size is 784 (28×28 pixels) and the output size corresponds to the 10 classes. The network learns just fine: it achieves an accuracy of 0.91 on the testing set with a cross entropy loss of 0.42. I use 10000 steps, a batch size of 50 and the Adam optimizer with a learning rate of 0.001.
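A single layer classifier of this kind can be sketched in a few lines of plain Python. This is only an illustration, not the code from the repository: the function names are made up, the parameters are zero-initialised rather than trained, and the input is assumed to be a flattened 28×28 MNIST image.

```python
import math

def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    largest = max(logits)  # subtracted for numerical stability
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label):
    """Negative log-probability assigned to the correct class."""
    return -math.log(probs[label])

def single_layer(pixels, weights, biases):
    """One fully connected layer: logits[j] = sum_i pixels[i] * weights[i][j] + biases[j]."""
    return [
        sum(p * row[j] for p, row in zip(pixels, weights)) + biases[j]
        for j in range(len(biases))
    ]

# Toy input: an all-zero "image" and zero-initialised parameters (assumption).
n_inputs, n_classes = 28 * 28, 10
pixels = [0.0] * n_inputs
weights = [[0.0] * n_classes for _ in range(n_inputs)]
biases = [0.0] * n_classes

probs = softmax(single_layer(pixels, weights, biases))
loss = cross_entropy(probs, label=3)
print(round(loss, 3))  # an untrained, uniform prediction gives ln(10), about 2.303
```

The untrained loss of about 2.3 is a useful reference point: the reported loss of 0.42 is well below what a network that guesses uniformly would produce.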

Single layer test accuracy

Single layer loss

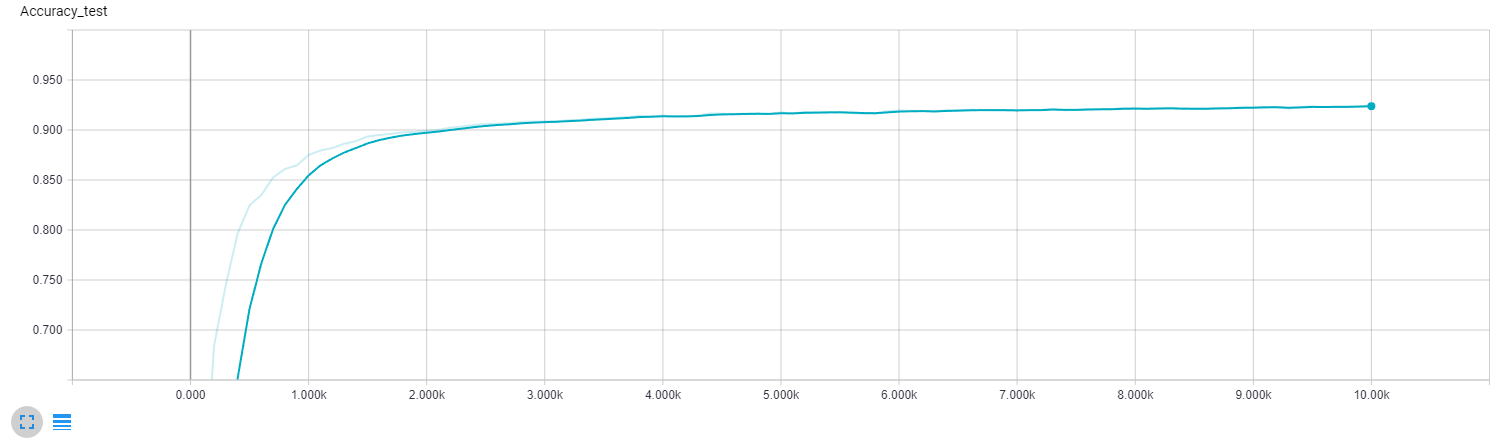

Two layers neural network

The second experiment uses a two layer network to test whether my implementation connects the layers correctly. The input size of the first layer is 784 and its output size is 64. The output size of the second layer is 10. The network learns a little better this time: it achieves an accuracy of 0.92 on the testing set with a cross entropy loss of 0.41. I use the same hyperparameters as for the single layer network.
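To put the two feedforward networks side by side, here is a rough count of their trainable parameters. It assumes flattened 28×28 MNIST images (784 values) and one bias per output unit; the post does not say whether biases are used, so treat the exact numbers as an estimate.

```python
def dense_parameters(n_inputs, n_outputs):
    """Weights plus biases of one fully connected layer."""
    return n_inputs * n_outputs + n_outputs

single_layer = dense_parameters(784, 10)
two_layers = dense_parameters(784, 64) + dense_parameters(64, 10)
print(single_layer, two_layers)  # 7850 50890
```

Under these assumptions the two layer network has roughly six times more parameters, while the test accuracy moves only from 0.91 to 0.92.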

Two layers test accuracy

Two layers loss

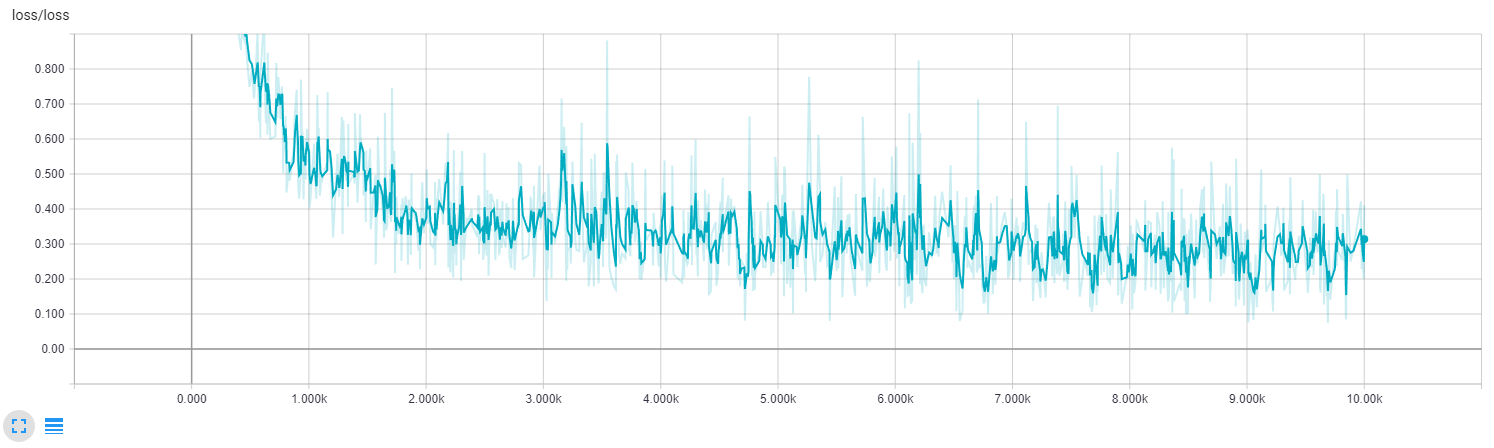

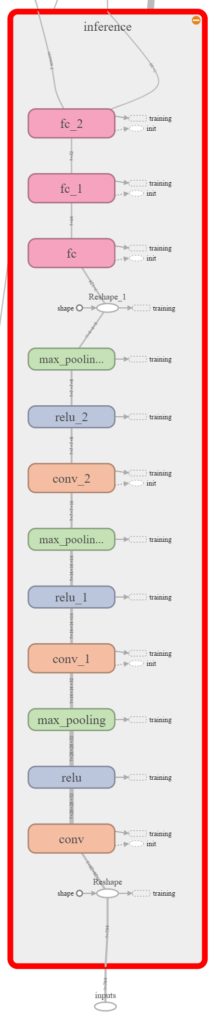

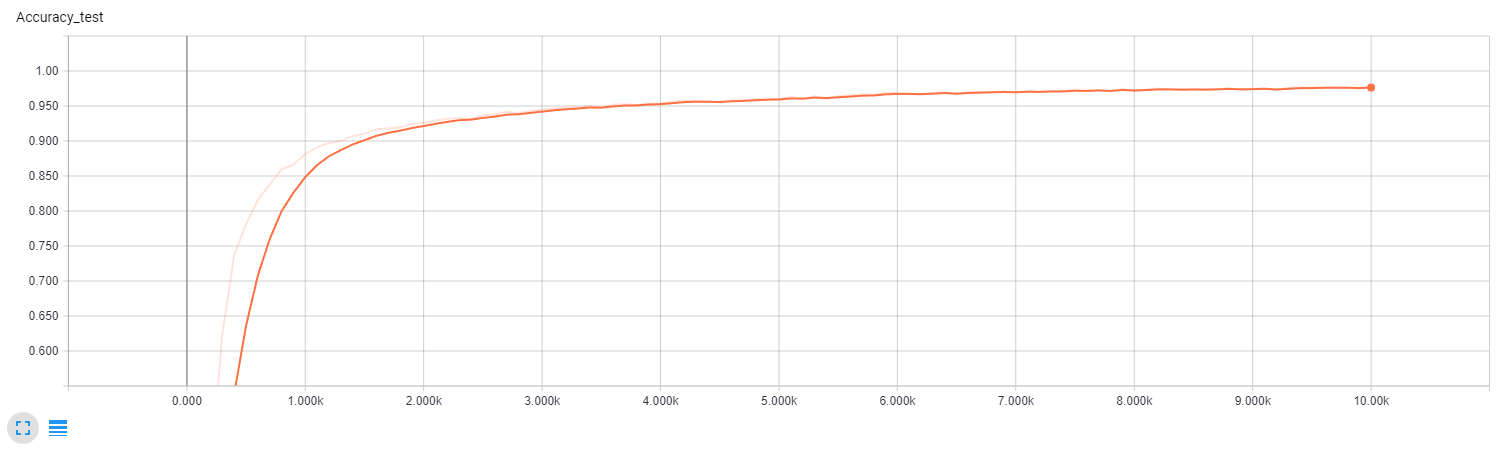

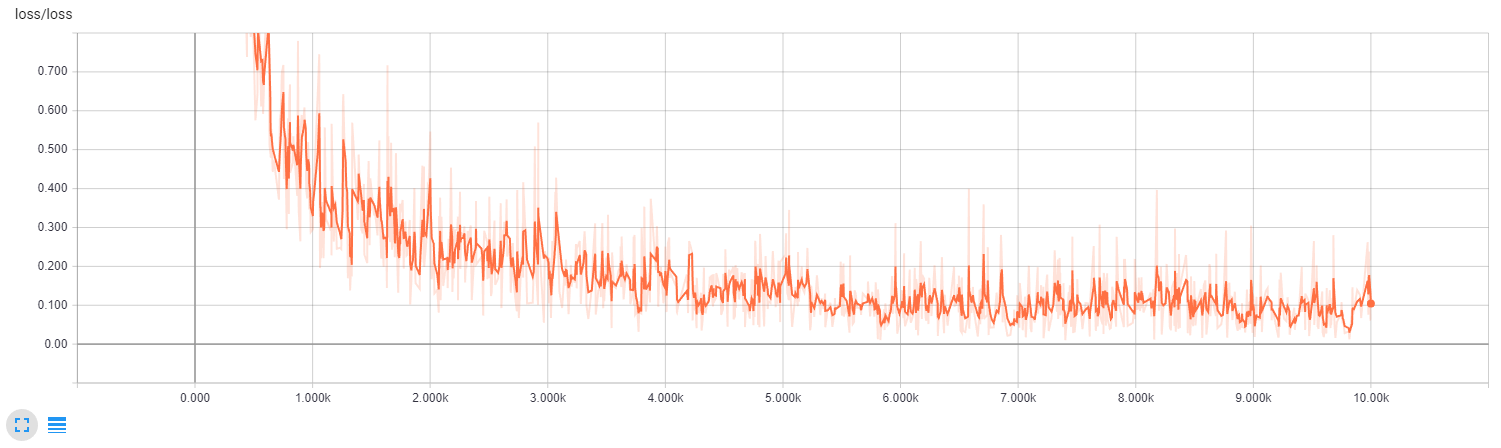

Convolutional neural network

The last experiment is to train a convolutional neural network. The network consists of three blocks of convolution, relu and max pooling, followed by three fully connected layers. The first convolution is 5×5 with 32 filters, the second is 3×3 with 16 filters and the last is 2×2 with 8 filters. The pooling is 2×2 after every convolutional layer. The input and output dimensions of the fully connected layers are [128,64], [64,32] and [32,10]. The rest of the hyperparameters are the same as for the feedforward networks.
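The input size of 128 for the first fully connected layer can be checked with a little shape arithmetic. The sketch below assumes stride-1 convolutions with “SAME” padding and 2×2 pooling with stride 2 and “SAME” padding; the post does not state the padding, but these assumptions reproduce the 128.

```python
import math

def same_padding_output(size, stride):
    """Output length of a 'SAME'-padded layer: ceil(size / stride)."""
    return math.ceil(size / stride)

def flattened_features(height, width, filters, pool_stride=2):
    """Flattened feature map size after one conv + pool block per entry in `filters`."""
    for _ in filters:
        # Stride-1 'SAME' convolutions keep height and width; only pooling shrinks them.
        height = same_padding_output(height, pool_stride)
        width = same_padding_output(width, pool_stride)
    return height * width * filters[-1]

print(flattened_features(28, 28, [32, 16, 8]))   # 128
print(flattened_features(28, 140, [32, 16, 8]))  # 576
```

The second call uses a hypothetical 28×140 input (five digits side by side). It gives 576 features, which matches the GRU size used for sequences later in the post.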

Convolutional neural network

The convolutional network achieves an accuracy of 0.97 on the testing dataset. The loss is 0.06.

Convolutional test accuracy

Convolutional loss

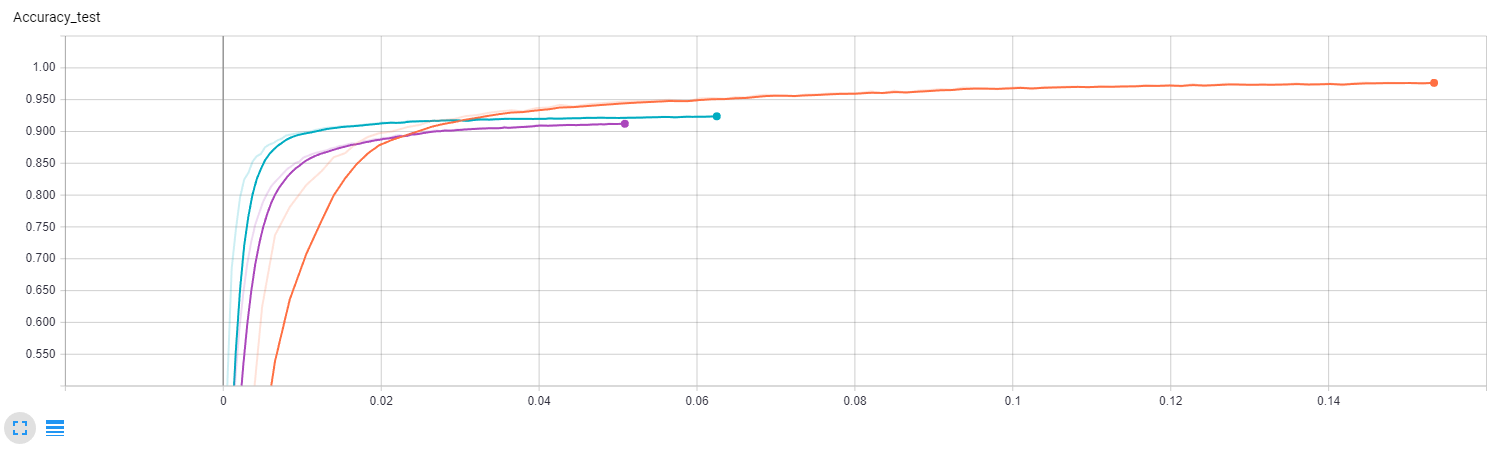

Conclusion

The convolutional network achieves the best results, as expected. However, training it takes about three times longer than training the single layer network (3m 3s for the single layer, 3m 45s for two layers and 9m 12s for the convolutional network).
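The “three times” figure follows directly from the reported training times. The small calculation below assumes the three times are listed in the order of the experiments (single layer, two layers, convolutional).

```python
def to_seconds(minutes, seconds):
    return 60 * minutes + seconds

# Assumed order: single layer, two layers, convolutional.
times = {
    "single layer": to_seconds(3, 3),
    "two layers": to_seconds(3, 45),
    "convolutional": to_seconds(9, 12),
}
baseline = times["single layer"]
ratios = {name: secs / baseline for name, secs in times.items()}
for name, ratio in ratios.items():
    print(f"{name}: {times[name]}s, {ratio:.2f}x the single layer time")
```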

Test accuracy in time (single layer – purple, two layers – green, convolutional – orange)

Update 5.7.2017

MNIST sequence recognition

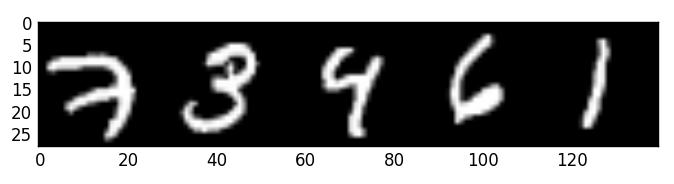

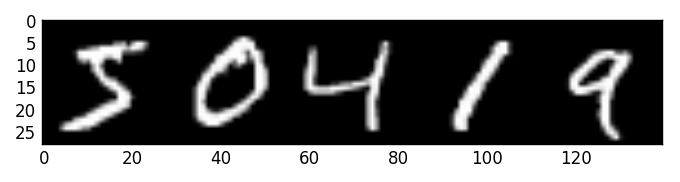

I move on to the recognition of sequences. I create a dataset of five-digit sequences by concatenating digits from MNIST. The training part of the dataset has 165000 examples, validation has 15000 and testing 30000. I don’t mix examples across the original MNIST splits: the training sequences are built only from the original training set, and the same holds for the validation and testing sets.
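One way to build such a dataset is to pick five digits from a single split and join their images side by side. The sketch below is an assumption about the procedure, not the code from the repository: the helper names are hypothetical, digits are sampled at random with replacement, and tiny 2×2 lists stand in for the real 28×28 images.

```python
import random

def make_sequence(images, labels, length, rng):
    """Pick `length` random digits and join their images side by side, row by row."""
    picks = [rng.randrange(len(images)) for _ in range(length)]
    n_rows = len(images[0])
    sequence_image = [
        [pixel for i in picks for pixel in images[i][row]]
        for row in range(n_rows)
    ]
    sequence_label = [labels[i] for i in picks]
    return sequence_image, sequence_label

def make_dataset(images, labels, n_examples, length=5, seed=0):
    """Build sequences from one split only, so the splits never mix."""
    rng = random.Random(seed)
    return [make_sequence(images, labels, length, rng) for _ in range(n_examples)]

# Tiny stand-in for one MNIST split: 2x2 "images" filled with their own label.
toy_images = [[[d, d], [d, d]] for d in range(10)]
toy_labels = list(range(10))

dataset = make_dataset(toy_images, toy_labels, n_examples=3)
image, label = dataset[0]
print(len(image), len(image[0]), label)  # 2 rows, 10 columns, five labels
```

Calling `make_dataset` once per original split keeps the training, validation and testing sequences separate. Each sequence split in the post is three times the size of its source split (for example 165000 = 3 × 55000).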

Example from training set

Example from validation set

Example from testing set

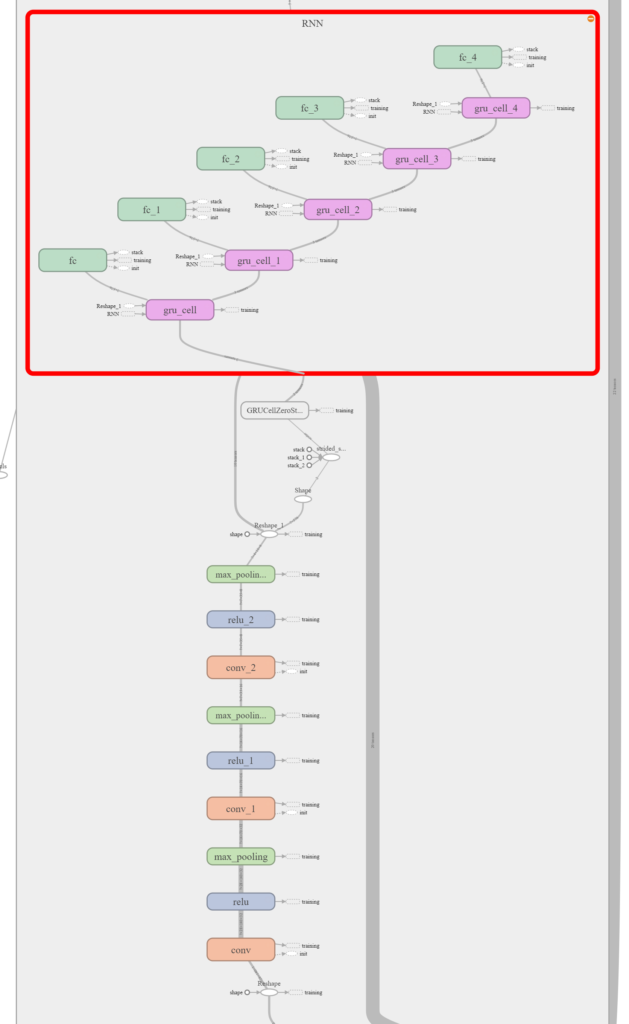

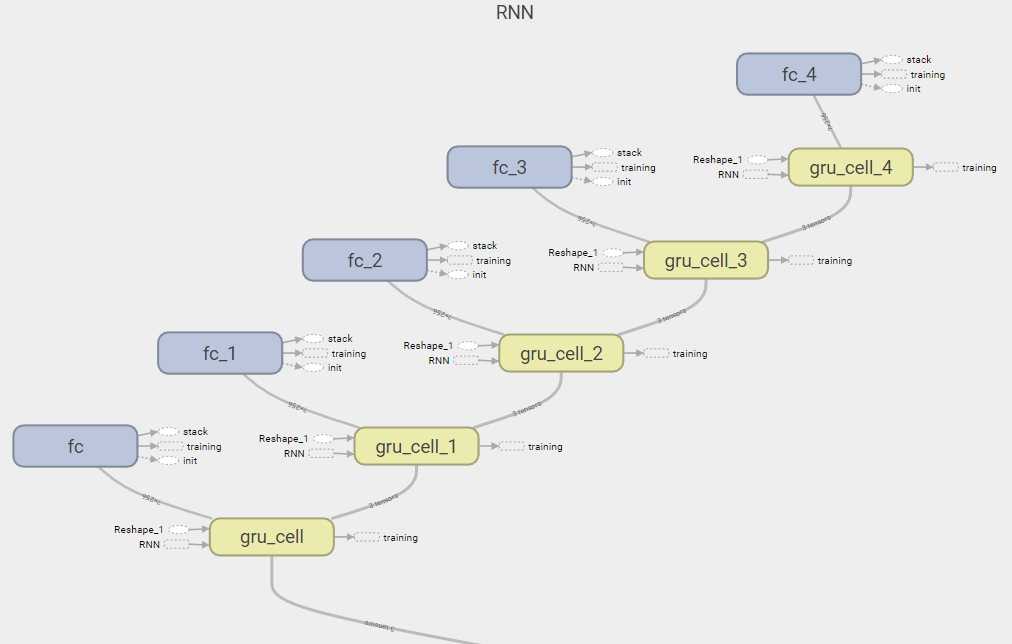

Recurrent neural network

I extend the convolutional architecture to recognize sequences by replacing the fully connected layers at the end with a recurrent layer. The recurrent cell is a GRU with 576 units, unrolled five times. The input to the GRU at each time step is the output of the convolution. The GRU output is sent to a fully connected layer. The hyperparameters are the same again (10000 learning steps, a batch size of 50, Adam optimizer with a learning rate of 0.0001).
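The unrolling described above can be illustrated with a minimal GRU cell in plain Python. This is a sketch under several assumptions: the sizes are tiny (4 inputs, 3 units) instead of 576, biases are omitted, the weights are random rather than trained, and the update rule uses one common convention, h' = (1 - z) · h + z · candidate. All names are made up for this example.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def matvec(matrix, vector):
    """Multiply a (rows x cols) matrix by a vector of length cols."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

class GRUCell:
    """Minimal GRU cell; biases are left out to keep the sketch short."""

    def __init__(self, input_size, hidden_size, rng):
        self.hidden_size = hidden_size
        # Each gate has an input matrix (w_*) and a recurrent matrix (u_*).
        self.w_update = random_matrix(hidden_size, input_size, rng)
        self.u_update = random_matrix(hidden_size, hidden_size, rng)
        self.w_reset = random_matrix(hidden_size, input_size, rng)
        self.u_reset = random_matrix(hidden_size, hidden_size, rng)
        self.w_cand = random_matrix(hidden_size, input_size, rng)
        self.u_cand = random_matrix(hidden_size, hidden_size, rng)

    def step(self, x, h):
        update = [sigmoid(a + b) for a, b in
                  zip(matvec(self.w_update, x), matvec(self.u_update, h))]
        reset = [sigmoid(a + b) for a, b in
                 zip(matvec(self.w_reset, x), matvec(self.u_reset, h))]
        gated = [r * hi for r, hi in zip(reset, h)]
        candidate = [math.tanh(a + b) for a, b in
                     zip(matvec(self.w_cand, x), matvec(self.u_cand, gated))]
        # Blend the old state with the candidate state.
        return [(1 - z) * hi + z * c for z, hi, c in zip(update, h, candidate)]

def unroll(cell, features, steps=5):
    """Feed the same convolutional features at every step; collect one output per digit."""
    h = [0.0] * cell.hidden_size
    outputs = []
    for _ in range(steps):
        h = cell.step(features, h)
        outputs.append(h)
    return outputs

rng = random.Random(0)
cell = GRUCell(input_size=4, hidden_size=3, rng=rng)
features = [0.2, -0.1, 0.4, 0.0]  # stand-in for the flattened convolution output
outputs = unroll(cell, features)
print(len(outputs), len(outputs[0]))  # 5 3
```

Each of the five outputs would then go through the fully connected layer to produce the scores for one digit position.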

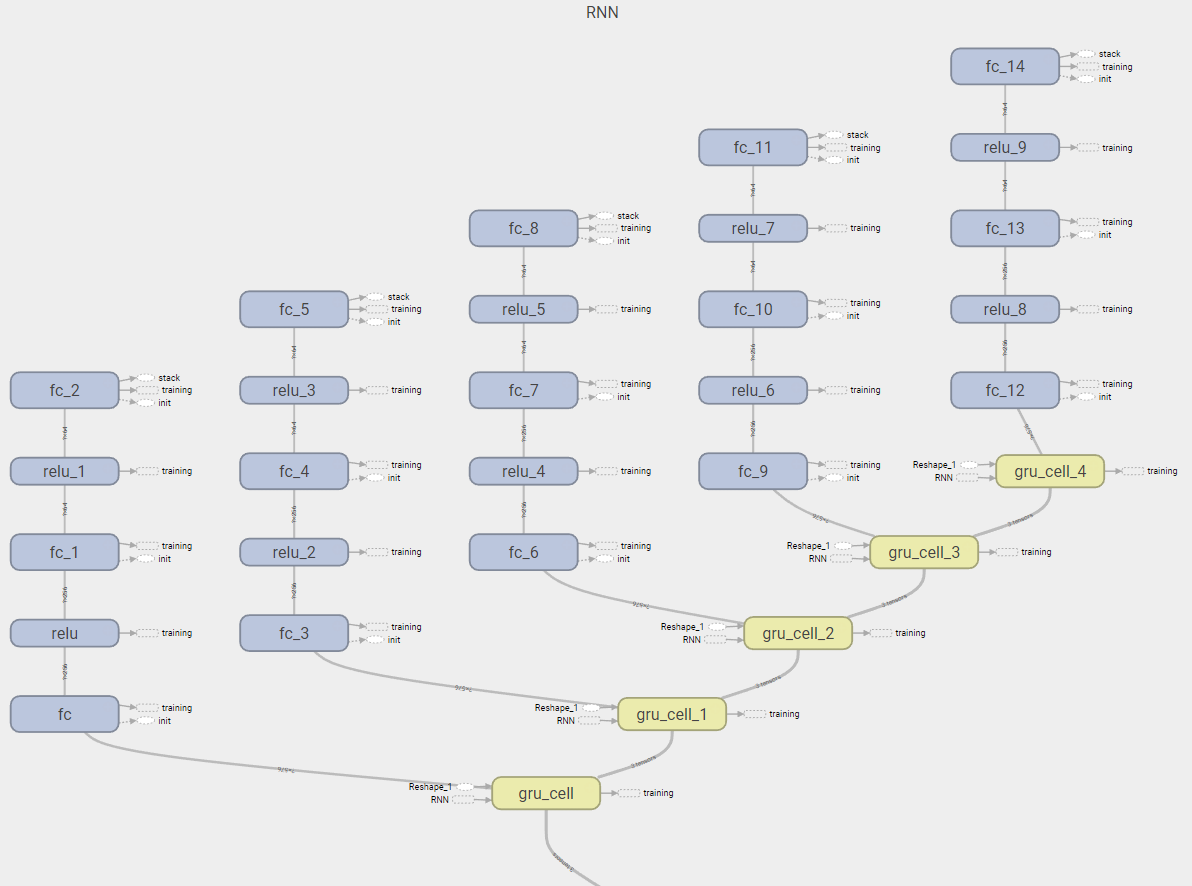

Sequence network

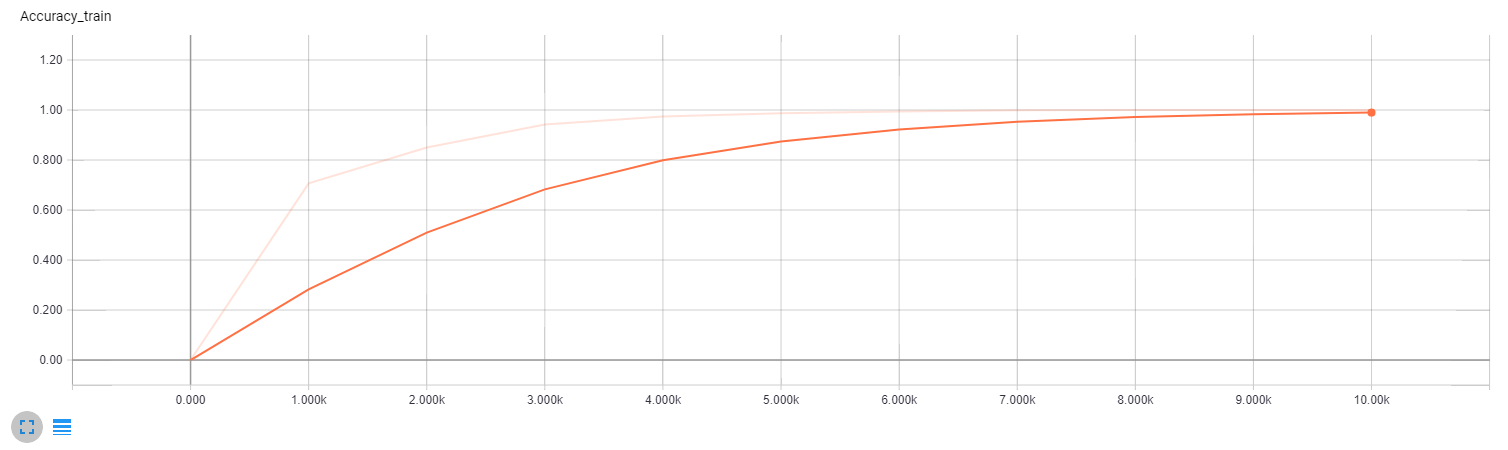

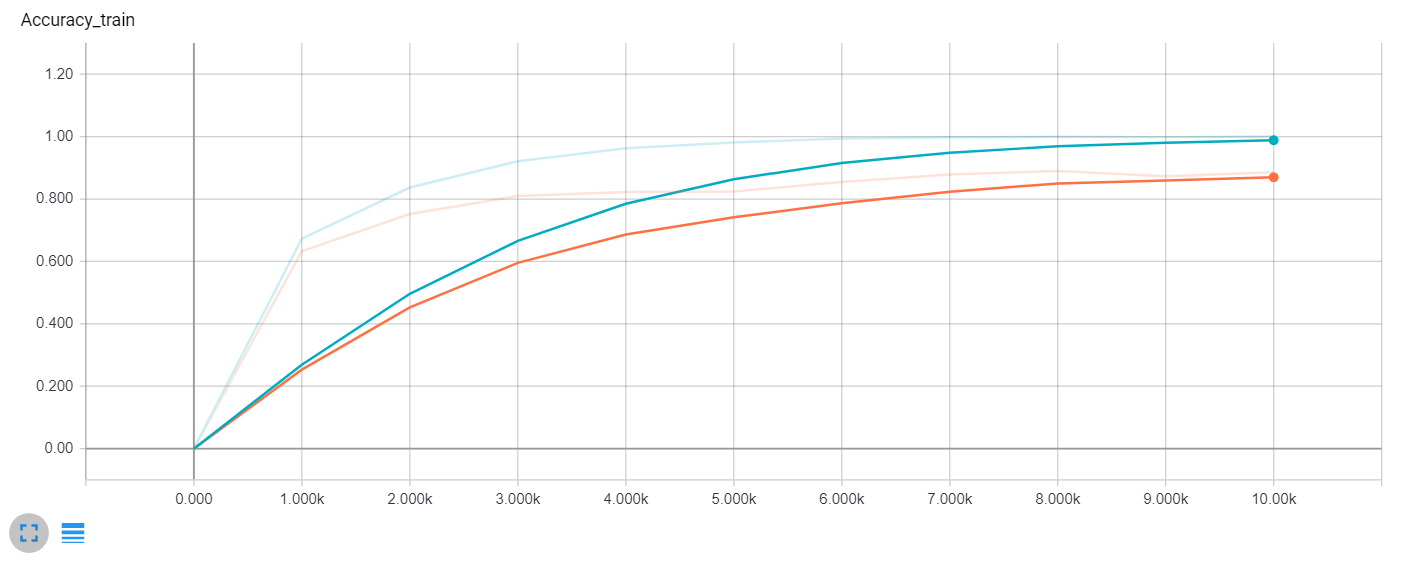

A recognition counts as correct only if all digits of the sequence are recognized correctly. The model learns the training set completely and achieves 100% accuracy on it. Together with the loss dropping to zero, this can be a sign of overfitting. The accuracies on the validation and test sets are 0.80 and 0.82 respectively.
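The all-digits-correct metric is stricter than per-digit accuracy. A minimal sketch of both, with made-up predictions and hypothetical function names:

```python
def sequence_accuracy(predictions, targets):
    """Fraction of sequences in which every digit matches."""
    hits = sum(1 for p, t in zip(predictions, targets) if list(p) == list(t))
    return hits / len(targets)

def digit_accuracy(predictions, targets):
    """Fraction of individual digits that match, ignoring sequence boundaries."""
    pairs = [(p, t) for ps, ts in zip(predictions, targets) for p, t in zip(ps, ts)]
    return sum(1 for p, t in pairs if p == t) / len(pairs)

# Invented toy data: one wrong digit in the second sequence.
targets = [[1, 2, 3, 4, 5], [6, 7, 8, 9, 0], [1, 1, 1, 1, 1]]
predictions = [[1, 2, 3, 4, 5], [6, 7, 8, 9, 1], [1, 1, 1, 1, 1]]

seq_acc = sequence_accuracy(predictions, targets)
dig_acc = digit_accuracy(predictions, targets)
print(seq_acc)  # 2 of 3 sequences are fully correct
print(dig_acc)  # 14 of 15 digits are correct
print(round(0.97 ** 5, 3))  # 0.859
```

The last line is a rough sanity check: if the five digits were recognized independently, each with the 0.97 accuracy of the single-digit convolutional network, all five would be right about 86% of the time. Independence is an assumption here, so this is only an order-of-magnitude reference.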

Sequence model training accuracy

Sequence model validation accuracy

Sequence model test accuracy

Sequence model loss

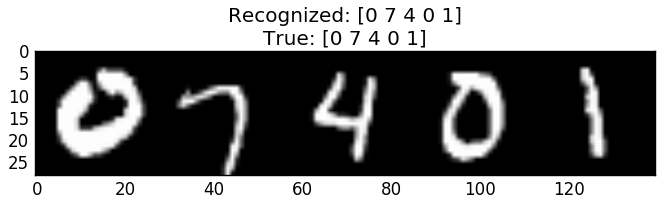

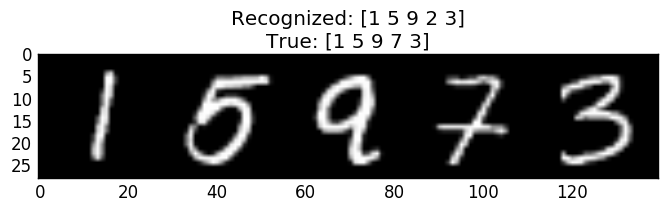

I check the recognitions. The model really is capable of learning to recognize sequences. However, it makes some really “dumb” mistakes. It also seems that most mistakes involve only one or two digits. I haven’t done a precise analysis of how large the share of these mistakes is; I base this “fact” on checking several wrong recognitions. You can see examples of correctly and incorrectly recognized sequences below.

Sequence model correct recognition of example from testing set

Sequence model incorrect recognition of example from testing set with single mistake

Sequence model incorrect recognition of example from testing set with two mistakes
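The share of one- and two-digit mistakes mentioned above could be measured by counting the wrong digits in every misrecognized sequence. The sketch below shows one way to do it; the data is invented for illustration and says nothing about the real model.

```python
from collections import Counter

def mistakes_per_sequence(predictions, targets):
    """Histogram: number of wrong digits -> number of misrecognized sequences."""
    histogram = Counter()
    for predicted, target in zip(predictions, targets):
        wrong = sum(1 for p, t in zip(predicted, target) if p != t)
        if wrong:  # fully correct sequences are not counted
            histogram[wrong] += 1
    return histogram

# Invented toy data, not results from the model.
targets = [[1, 2, 3, 4, 5], [6, 7, 8, 9, 0], [1, 1, 1, 1, 1], [2, 2, 2, 2, 2]]
predictions = [[1, 2, 3, 4, 5], [6, 7, 8, 9, 1], [1, 1, 7, 1, 3], [2, 2, 2, 2, 0]]

histogram = mistakes_per_sequence(predictions, targets)
total = sum(histogram.values())
for wrong, count in sorted(histogram.items()):
    print(f"{wrong} wrong digit(s): {count} sequences ({count / total:.0%} of errors)")
```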

Update 6.7.2017

Recurrent neural network with dropout

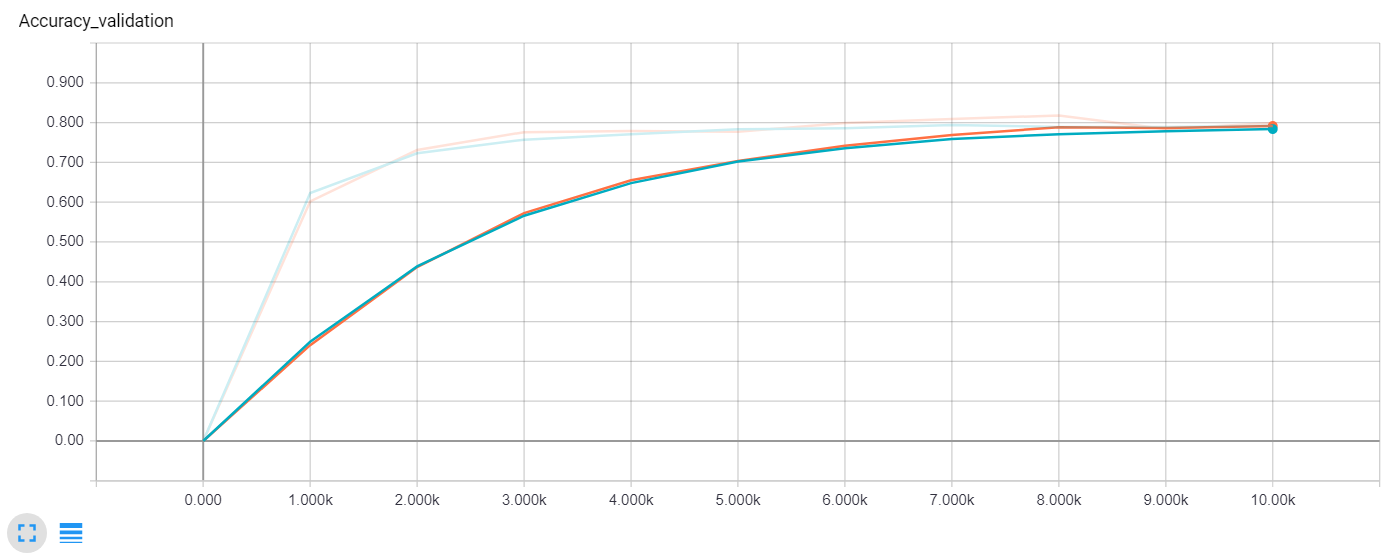

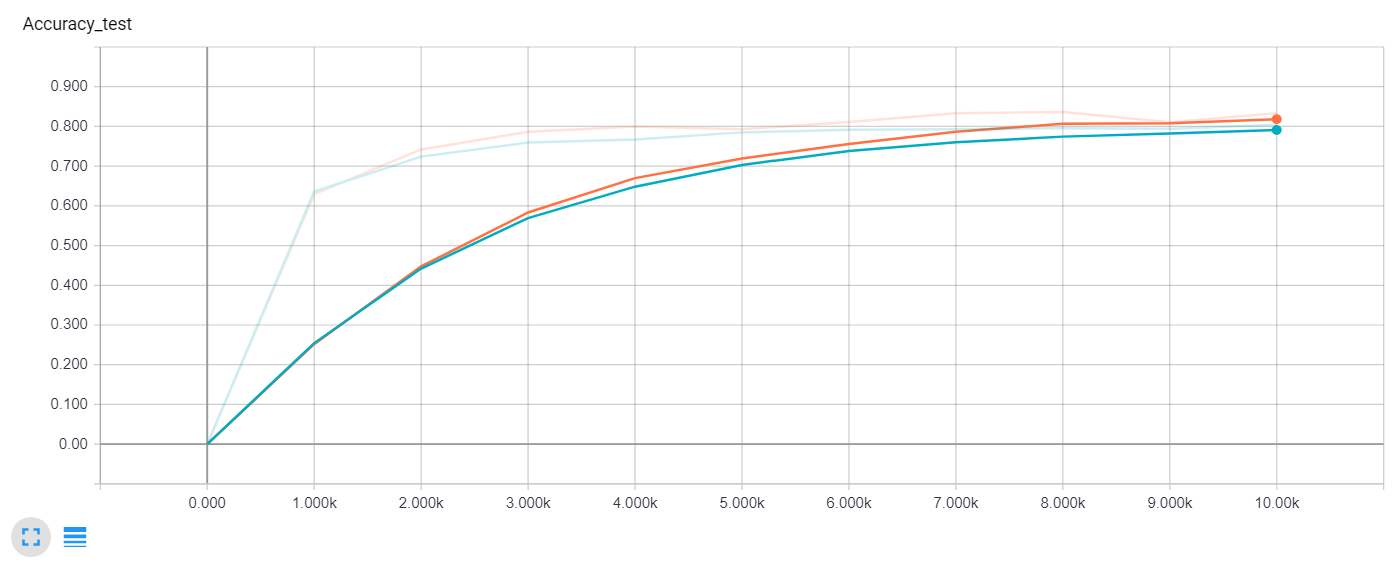

My next step is to fight overfitting. I apply dropout to the convolutional layers with a keep probability of 0.5. The model with dropout achieves better accuracy on the testing set, about the same on the validation set and worse accuracy on the training set (0.88 training, 0.79 validation, 0.83 testing). The hyperparameters are the same again.
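For readers unfamiliar with the keep probability, here is a minimal sketch of “inverted” dropout in plain Python. Each activation survives with probability `keep_prob` and is scaled by `1 / keep_prob`, so the expected value stays the same; as far as I know this is also how `tf.nn.dropout` behaved with its `keep_prob` argument in TensorFlow 1.x. The function itself is illustrative and not taken from the repository.

```python
import random

def dropout(values, keep_prob, rng, training=True):
    """Zero each value with probability 1 - keep_prob and rescale the survivors."""
    if not training:
        return list(values)  # dropout is switched off at evaluation time
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

rng = random.Random(0)
activations = [1.0] * 8
dropped = dropout(activations, keep_prob=0.5, rng=rng)
print(dropped)  # a mix of 0.0 and 2.0
print(dropout(activations, keep_prob=0.5, rng=rng, training=False))  # unchanged
```

Because dropout is only active during training, the training accuracy is typically measured on a handicapped network, which is one reason it drops from 1.0 to 0.88 here.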

Train accuracy comparison (Blue – no dropout, Orange – dropout)

Validation accuracy comparison (Blue – no dropout, Orange – dropout)

Test accuracy comparison (Blue – no dropout, Orange – dropout)

Loss comparison (Blue – no dropout, Orange – dropout)

Update 8.7.2017

Recurrent neural network with dropout and more training steps

I try more learning steps because the problem with overfitting is gone. I use 30k learning steps instead of 10k. The dropout keep probability of 0.5 and the other hyperparameters stay the same. More learning steps help. The achieved accuracies are 0.97 on the training set, 0.85 on the validation set and 0.85 on the testing set.

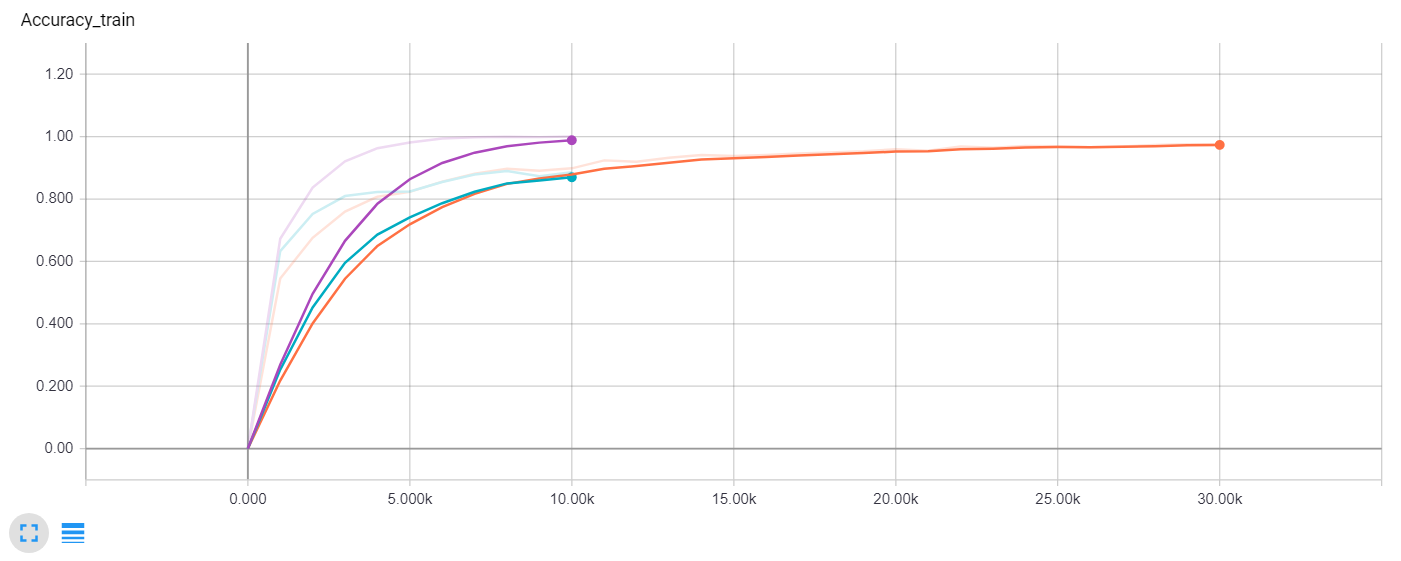

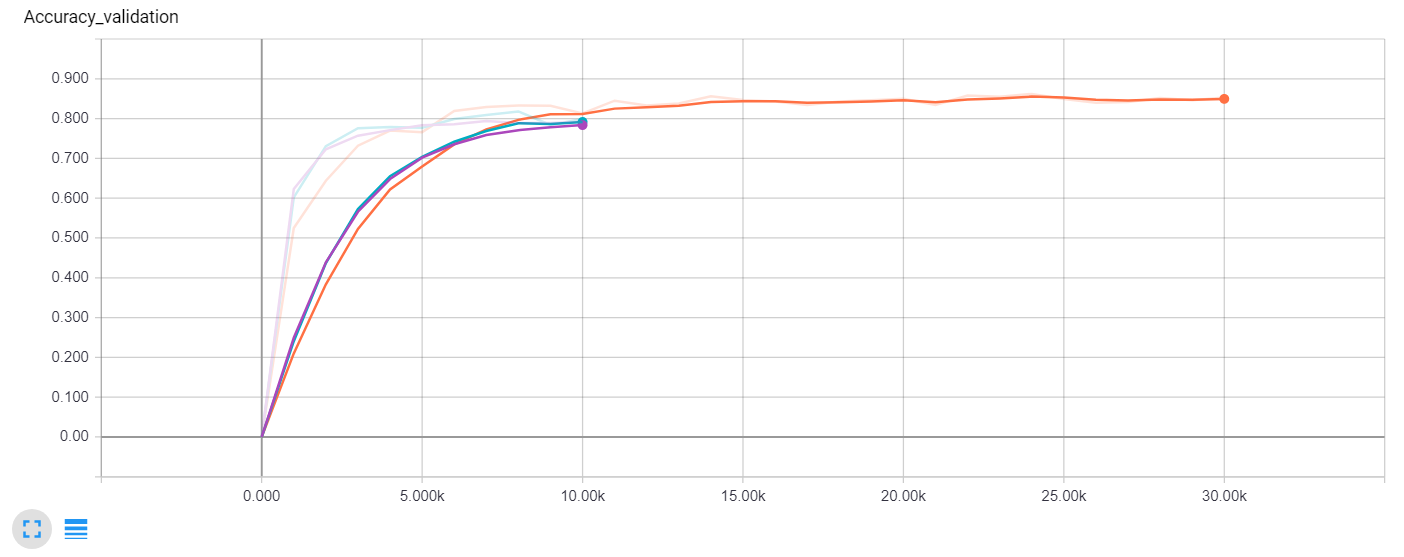

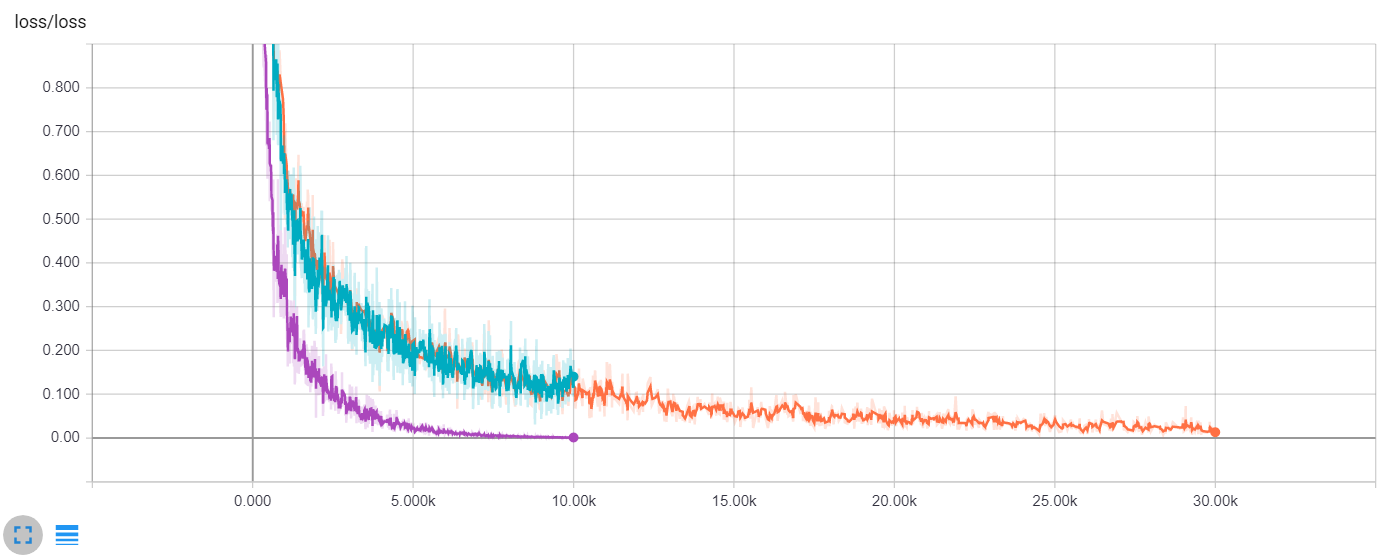

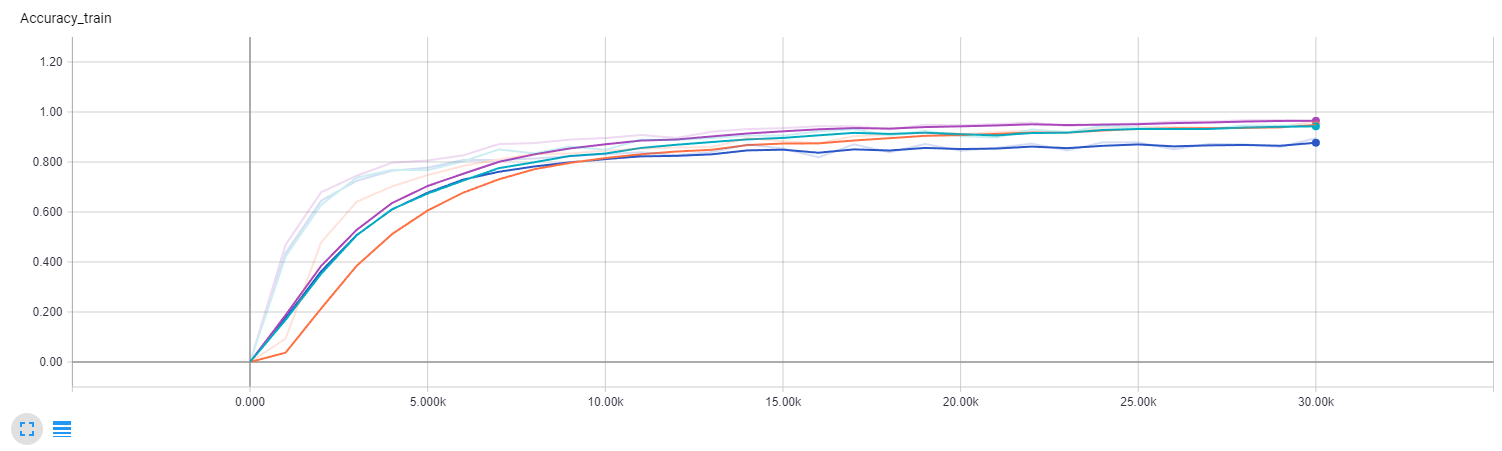

Train accuracy comparison (Purple – no dropout, Green – dropout 10k steps, Orange – dropout 30k steps)

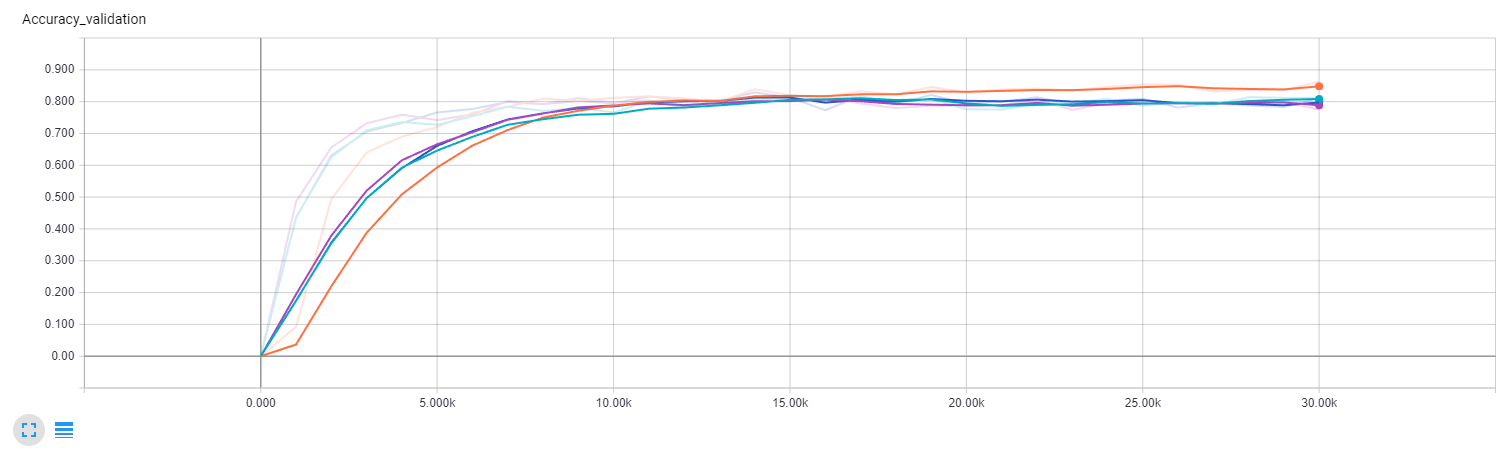

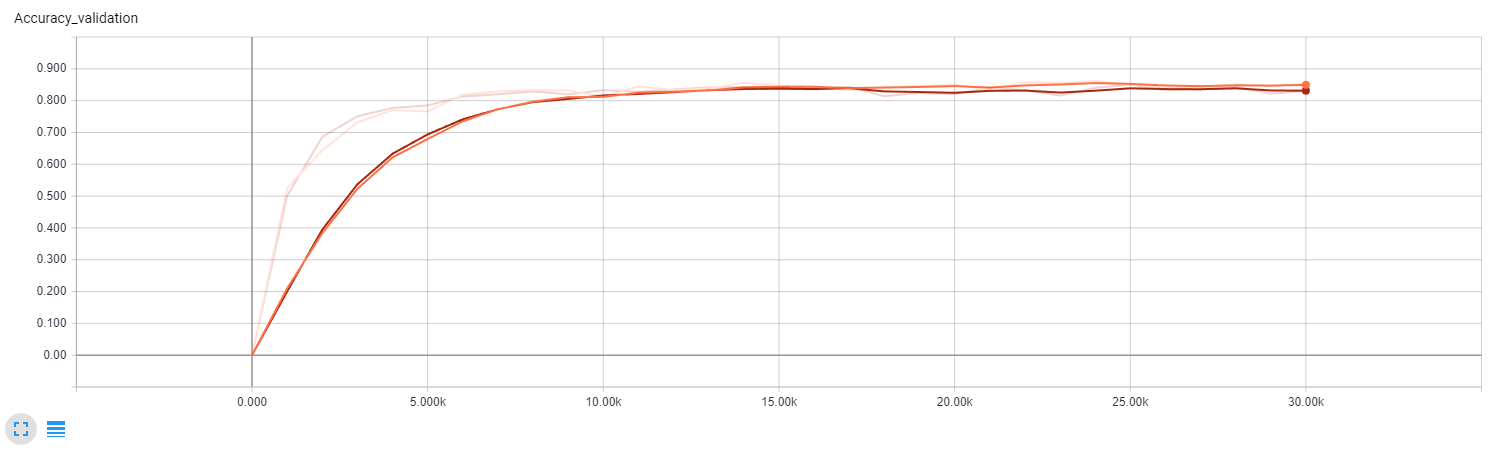

Validation accuracy comparison (Purple – no dropout, Green – dropout 10k steps, Orange – dropout 30k steps)

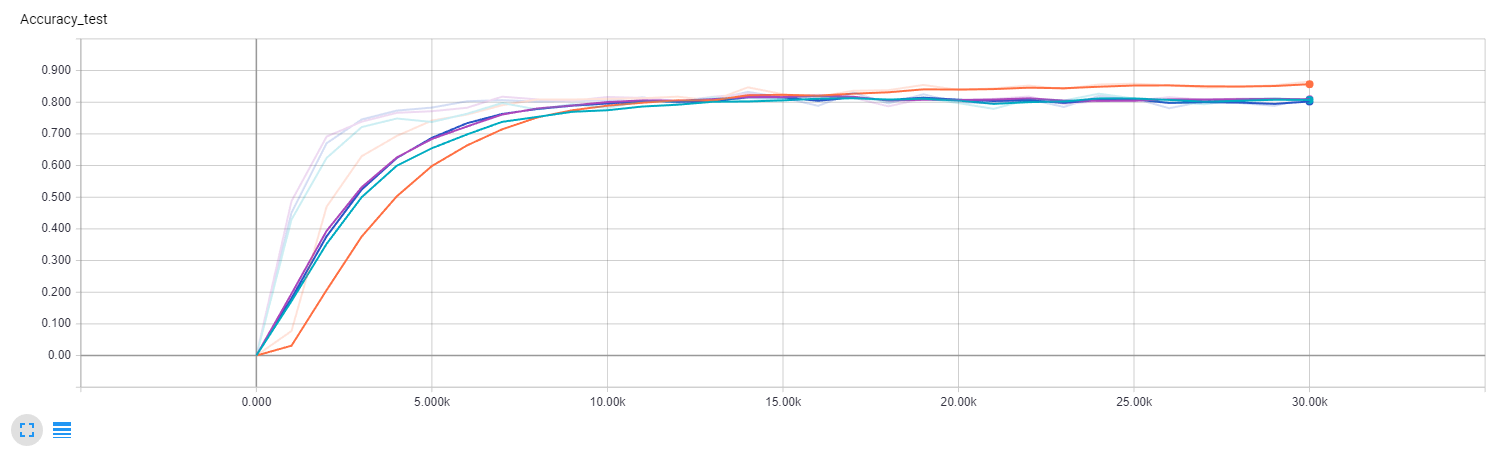

Test accuracy comparison (Purple – no dropout, Green – dropout 10k steps, Orange – dropout 30k steps)

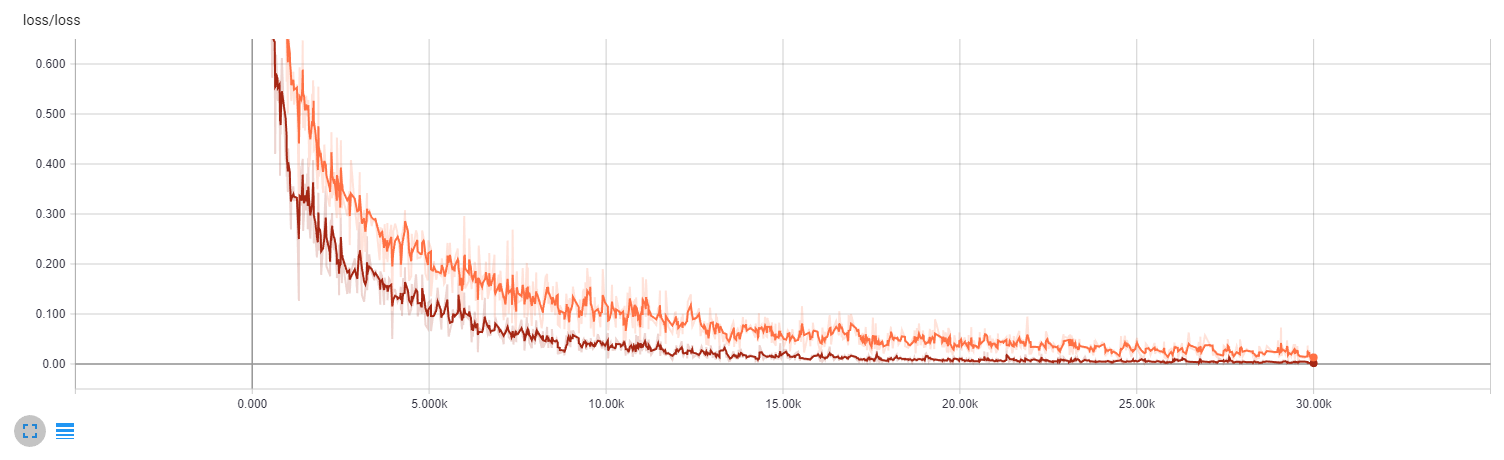

Loss comparison (Purple – no dropout, Green – dropout 10k steps, Orange – dropout 30k steps)

Update 21.7.2017

Improving the recurrent part of the network

I explore opportunities to improve the performance by changing the recurrent part of the network. My current recurrent part is a GRU cell of size 576, unrolled five times and followed by a fully connected layer.

Baseline recurrent layer

The first experiment is to increase the size of the GRU cell from 576 to 1024. The second experiment is to decrease the size of the GRU cell from 576 to 256. The size of the single fully connected layer changes to match the size of the GRU cell.

The next experiment is to increase the number of fully connected layers. The size of the GRU cell is 576. I use three layers of sizes [576,256], [256,64] and [64,10]. There is a relu activation function between the layers.

Recurrent layer with three output layers

I use dropout in the last experiment because I am afraid of overfitting. Dropout is applied between the first and second, and the second and third layers. The keep probability is 0.5.
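The three-layer output head with dropout can be sketched as follows. The layer sizes come from the text; everything else is an assumption made for illustration: the weights are random, dropout is placed after each relu, and a random vector stands in for one GRU output.

```python
import random

def dense(inputs, weights, biases):
    """Fully connected layer; `weights` holds one row of input weights per output unit."""
    return [sum(x * w for x, w in zip(inputs, row)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    return [max(0.0, v) for v in values]

def dropout(values, keep_prob, rng):
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

def init_layer(n_inputs, n_outputs, rng):
    weights = [[rng.gauss(0.0, 0.05) for _ in range(n_inputs)] for _ in range(n_outputs)]
    return weights, [0.0] * n_outputs

def output_head(gru_output, layers, keep_prob, rng, training):
    """dense -> relu -> dropout, twice, then a final dense layer producing 10 scores."""
    values = gru_output
    for index, (weights, biases) in enumerate(layers):
        values = dense(values, weights, biases)
        if index < len(layers) - 1:  # no relu or dropout after the final scores
            values = relu(values)
            if training:
                values = dropout(values, keep_prob, rng)
    return values

rng = random.Random(0)
sizes = [(576, 256), (256, 64), (64, 10)]
layers = [init_layer(n_in, n_out, rng) for n_in, n_out in sizes]
gru_output = [rng.uniform(-1.0, 1.0) for _ in range(576)]  # stand-in for one time step

logits = output_head(gru_output, layers, keep_prob=0.5, rng=rng, training=False)
print(len(logits))  # 10
```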

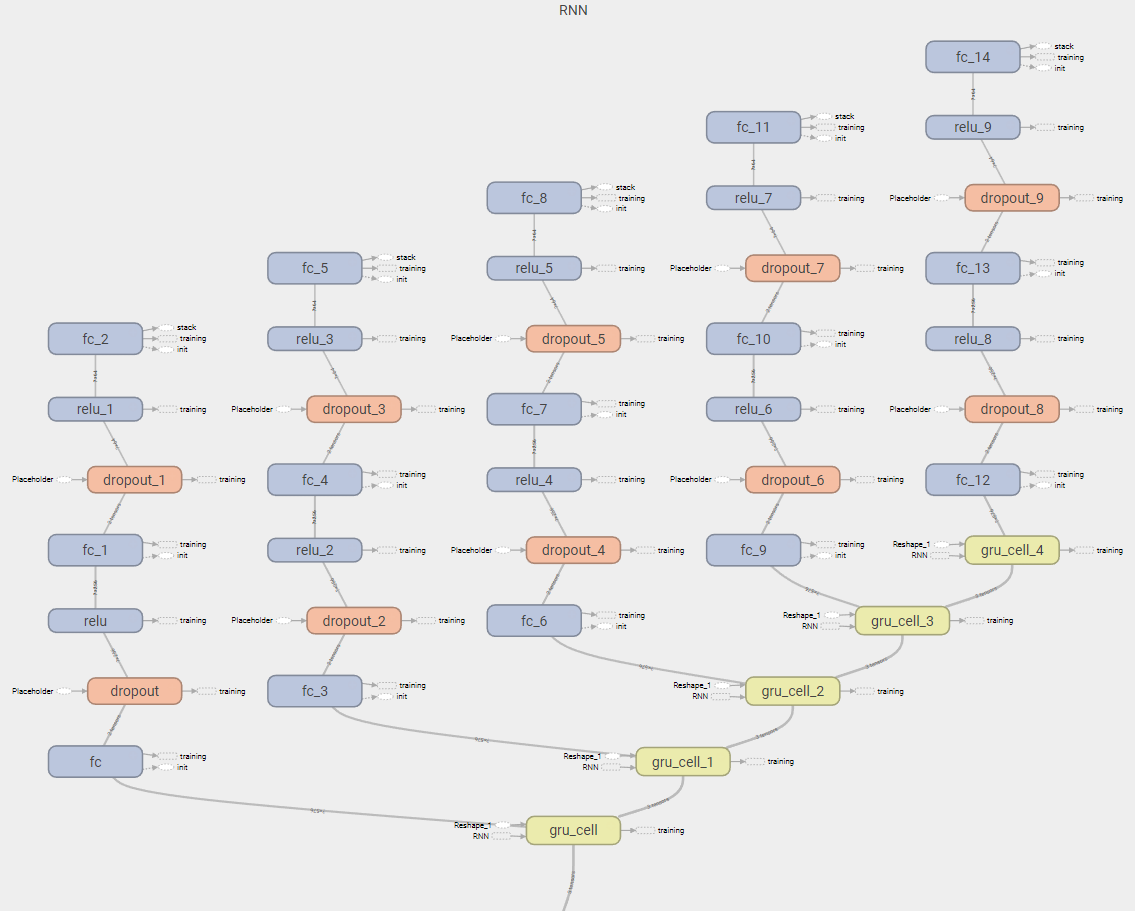

Recurrent layer with three output layers and dropout

The convolutional layer and hyperparameters stay the same in all four experiments. The first three experiments don’t improve accuracy. Only the fourth experiment brings slightly better results than the best model up to this point.

| Experiment/Dataset accuracy | Training | Validation | Testing |
| --- | --- | --- | --- |
| Best model up to this point | 0.97 | 0.85 | 0.85 |
| Smaller GRU cell size | 0.89 | 0.81 | 0.81 |
| Bigger GRU cell size | 0.96 | 0.77 | 0.80 |
| More output fully connected layers | 0.94 | 0.81 | 0.80 |
| More output fully connected layers with dropout | 0.96 | 0.86 | 0.86 |

Recurrence experiments train accuracy (Blue – smaller GRU cell size, Purple – bigger GRU cell size, Green – more output fully connected layers, Orange – more output fully connected layers with dropout)

Recurrence experiments validation accuracy (Blue – smaller GRU cell size, Purple – bigger GRU cell size, Green – more output fully connected layers, Orange – more output fully connected layers with dropout)

Recurrence experiments test accuracy (Blue – smaller GRU cell size, Purple – bigger GRU cell size, Green – more output fully connected layers, Orange – more output fully connected layers with dropout)

Recurrence experiments loss (Blue – smaller GRU cell size, Purple – bigger GRU cell size, Green – more output fully connected layers, Orange – more output fully connected layers with dropout)

My conclusion is that manipulating the recurrent layer doesn’t help much. I will focus on the convolutional part of the neural network. Increasing the number of convolutional layers looks promising, as suggested in the paper Multi-digit Number Recognition from Street View Imagery using Deep Convolutional Neural Networks.

Update 18.7.2017

Reshaped convolution

I change the shapes of the convolutional layers. My friend Long Hoang Nguen (a.k.a. Roman) suggested that the first layers should have fewer filters and bigger pooling, and later layers should have more filters and smaller pooling. The reason is that the network first needs to capture the basic features of the image, like lines, and reduce the dimensionality quickly. I rearrange the original convolutions into 10×10 with 8 filters in the first layer, 5×5 with 16 filters in the second and 2×2 with 32 filters in the third. The rest remains the same.

The network achieves worse results than the original network (the changes to the recurrent part from the previous update are not applied). The results are in the table below.
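To see how the rearrangement shifts capacity between layers, the sketch below counts the convolutional weights of both variants. It assumes a single-channel (grayscale) input and one bias per filter, neither of which is stated in the post.

```python
def conv_parameters(kernel, in_channels, filters):
    """Weights plus biases of one square convolution."""
    return kernel * kernel * in_channels * filters + filters

def network_parameters(layers, in_channels=1):
    """`layers` is a list of (kernel size, number of filters) pairs."""
    counts = []
    for kernel, filters in layers:
        counts.append(conv_parameters(kernel, in_channels, filters))
        in_channels = filters  # the next layer sees this layer's filters as channels
    return counts

original = network_parameters([(5, 32), (3, 16), (2, 8)])
reshaped = network_parameters([(10, 8), (5, 16), (2, 32)])
print(original, sum(original))  # [832, 4624, 520] 5976
print(reshaped, sum(reshaped))  # [808, 3216, 2080] 6104
```

Under these assumptions the total number of convolutional parameters is almost unchanged; the reshaped variant mainly moves filters from the first layer to the last one.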

| Experiment/Dataset accuracy | Training | Validation | Testing |
| --- | --- | --- | --- |
| Original network | 0.97 | 0.84 | 0.85 |
| Reshaped convolution | 0.97 | 0.83 | 0.83 |

Train accuracy of original (Orange) and reshaped convolutions (Brown)

Validation accuracy of original (Orange) and reshaped convolutions (Brown)

Test accuracy of original (Orange) and reshaped convolutions (Brown)

Loss of original (Orange) and reshaped convolutions (Brown)

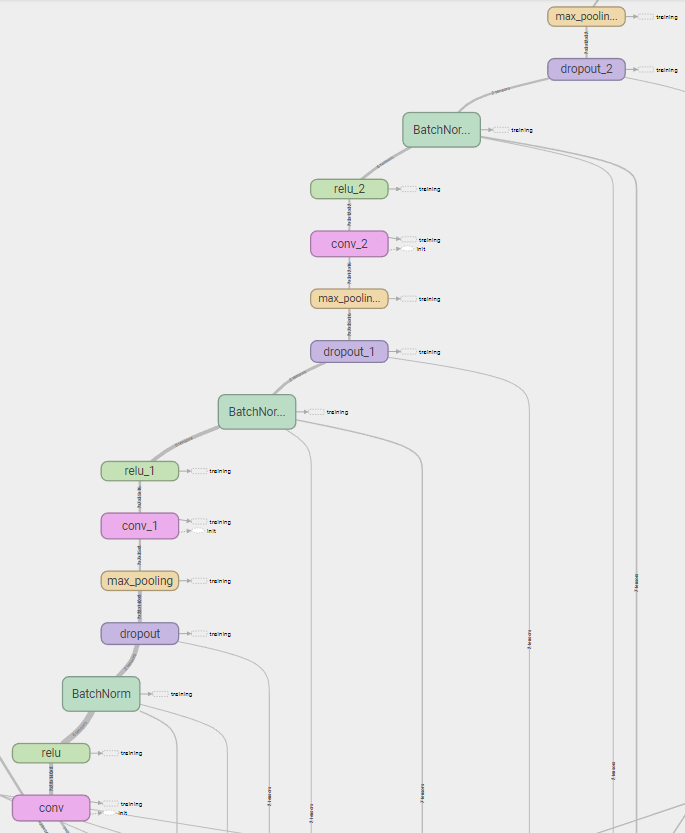

Batch normalization

Roman also suggested batch normalization. I add batch normalization between the convolutional layers of the “reshaped convolution” model. The decay parameter is set to 0.9. I change the dropout keep probability from 0.5 to 0.8 because the performance is very poor with 0.5.
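The role of the decay parameter is easiest to see in a small sketch. The class below normalizes a batch of scalars with the batch statistics during training and keeps exponential moving averages for use at evaluation time. It is a simplified illustration: the learnable scale and shift are omitted, the epsilon value is an arbitrary choice, and the class name is made up.

```python
import math

class BatchNorm1D:
    """Batch normalization for a batch of scalars, without scale and shift."""

    def __init__(self, decay=0.9, epsilon=1e-3):
        self.decay = decay
        self.epsilon = epsilon
        self.moving_mean = 0.0
        self.moving_var = 1.0

    def __call__(self, batch, training):
        if training:
            mean = sum(batch) / len(batch)
            var = sum((v - mean) ** 2 for v in batch) / len(batch)
            # A decay of 0.9 keeps 90% of the old estimate at every step.
            self.moving_mean = self.decay * self.moving_mean + (1 - self.decay) * mean
            self.moving_var = self.decay * self.moving_var + (1 - self.decay) * var
        else:
            mean, var = self.moving_mean, self.moving_var
        return [(v - mean) / math.sqrt(var + self.epsilon) for v in batch]

norm = BatchNorm1D(decay=0.9)
normalized = norm([1.0, 2.0, 3.0, 4.0], training=True)
print(norm.moving_mean, norm.moving_var)  # close to 0.25 and 1.025 after one step
evaluated = norm([1.0, 2.0, 3.0, 4.0], training=False)
```

A higher decay makes the moving averages change more slowly, so they need more training steps to settle but are less noisy at evaluation time.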

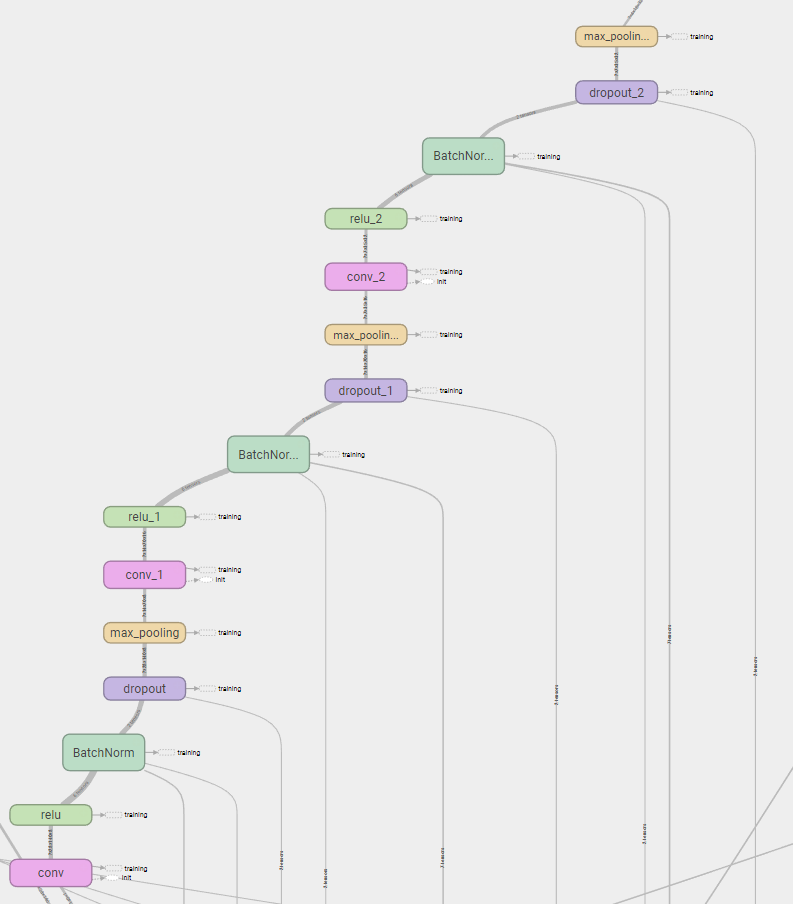

Convolution part of network with batch normalization

This model achieves the best accuracy on the testing set (88%). Batch normalization adds 2 percentage points of accuracy on the testing set (86% without batch normalization). I also test keep probabilities of 0.85 and 0.75 for dropout. They don’t improve the accuracy. The results are in the table below.

| Experiment/Dataset accuracy | Training | Validation | Testing |
| --- | --- | --- | --- |
| No batch normalization, 0.8 keep probability | 1.0 | 0.85 | 0.86 |
| Batch normalization, 0.8 keep probability | 1.0 | 0.86 | 0.88 |
| Batch normalization, 0.85 keep probability | 1.0 | 0.87 | 0.87 |
| Batch normalization, 0.75 keep probability | 0.99 | 0.87 | 0.86 |

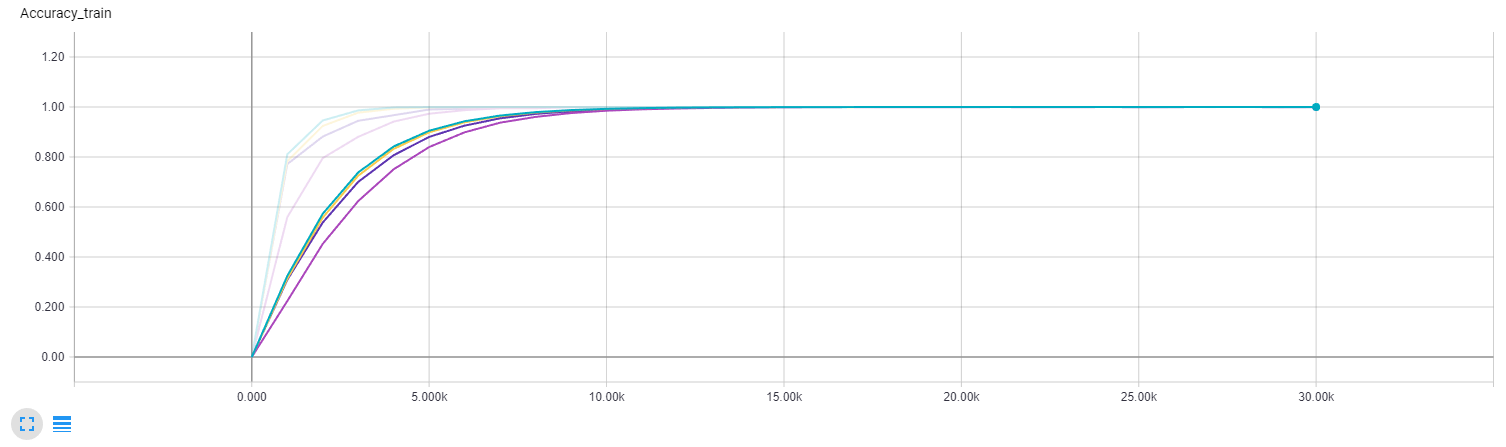

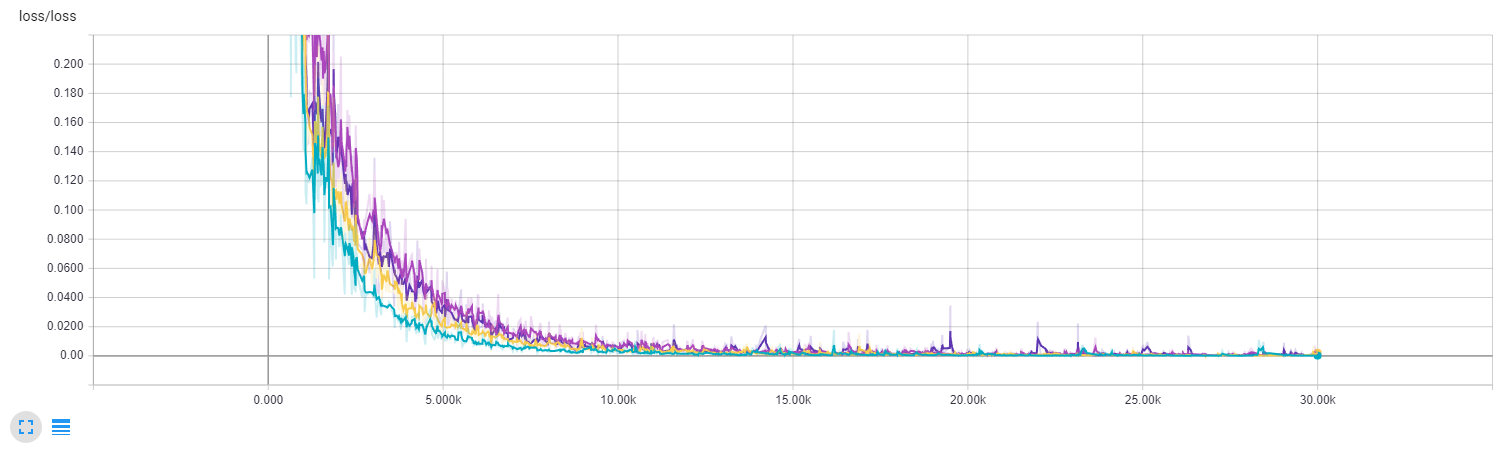

Train accuracy comparison (Yellow – batch normalization, keep probability 0.8; Dark purple – no batch normalization, keep probability 0.8; Green – batch normalization, 0.85 keep probability; Light purple – batch normalization, 0.75 keep probability)

Validation accuracy comparison (Yellow – batch normalization, keep probability 0.8; Dark purple – no batch normalization, keep probability 0.8; Green – batch normalization, 0.85 keep probability; Light purple – batch normalization, 0.75 keep probability)

Test accuracy comparison (Yellow – batch normalization, keep probability 0.8; Dark purple – no batch normalization, keep probability 0.8; Green – batch normalization, 0.85 keep probability; Light purple – batch normalization, 0.75 keep probability)

Loss comparison (Yellow – batch normalization, keep probability 0.8; Dark purple – no batch normalization, keep probability 0.8; Green – batch normalization, 0.85 keep probability; Light purple – batch normalization, 0.75 keep probability)

Deeper convolution models

I experiment with deeper convolutions. The first variant is a network with four convolutional layers (I had not yet used batch normalization when I tested it). It consists of a 10×10 convolution with 8 filters, relu, dropout with a keep probability of 0.5, 2×2 max pooling, a 5×5 convolution with 16 filters, relu, dropout, 2×2 max pooling, a 2×2 convolution with 24 filters, relu, dropout, 2×2 max pooling, a 2×2 convolution with 32 filters, relu and dropout.

Deeper model architecture

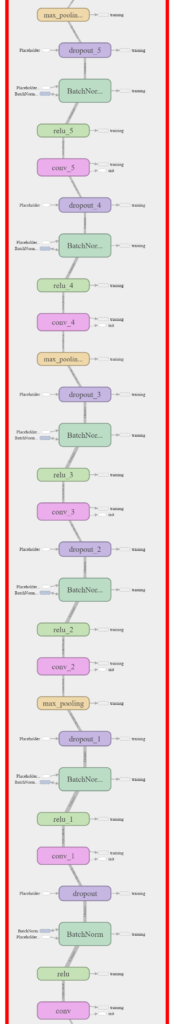

The next experiment tests a network with doubled convolutional layers. It consists of a 10×10 convolution with 8 filters, relu, batch normalization, dropout, a 10×10 convolution with 8 filters, relu, batch normalization, dropout, 2×2 max pooling, a 5×5 convolution with 16 filters, relu, batch normalization, dropout, a 5×5 convolution with 16 filters, relu, batch normalization, dropout, 2×2 max pooling, a 2×2 convolution with 32 filters, relu, batch normalization, dropout, a 2×2 convolution with 32 filters, relu, batch normalization, dropout and 2×2 max pooling. The keep probability is 0.8 and the decay is 0.9.

Double model architecture

The last experiment tests overlapping max pooling as proposed in the paper ImageNet Classification with Deep Convolutional Neural Networks. The paper reported a 0.4% increase in accuracy on their task. The network consists of a 10×10 convolution with 8 filters, relu, batch normalization, dropout, 5×5 max pooling with a stride of 4×4, a 5×5 convolution with 16 filters, relu, batch normalization, dropout, 4×4 max pooling with a stride of 3×3, a 2×2 convolution with 32 filters, relu, batch normalization, dropout and 2×2 max pooling with a stride of 1×1. The keep probability is 0.8 and the decay is 0.9.
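Pooling overlaps whenever the window is larger than the stride. The sketch below computes the feature map sizes for the three pooling layers listed above, using the standard output-size formulas for “SAME” and “VALID” padding. The 28×140 input (five digits side by side) and the use of “SAME” padding are assumptions; the post states neither.

```python
import math

def pool_output(size, window, stride, padding="same"):
    """Output length of a pooling layer along one dimension."""
    if padding == "same":
        return math.ceil(size / stride)
    return (size - window) // stride + 1  # "valid": only full windows are used

def is_overlapping(window, stride):
    """Neighbouring windows share pixels when the window exceeds the stride."""
    return window > stride

pools = [(5, 4), (4, 3), (2, 1)]  # (window, stride) of the three pooling layers
height, width = 28, 140           # assumed input size
shapes = []
for window, stride in pools:
    height = pool_output(height, window, stride)
    width = pool_output(width, window, stride)
    shapes.append((height, width))
print(shapes)  # [(7, 35), (3, 12), (3, 12)]
```

Note that the last pooling layer has a stride of 1, so it does not reduce the feature map at all under “SAME” padding.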

Overlapping stride architecture

None of these models achieves better testing accuracy than the model with batch normalization. The results are shown in the table below.

| Experiment/Dataset accuracy | Training | Validation | Testing |
| --- | --- | --- | --- |
| Batch normalization, 0.8 keep probability | 1.0 | 0.86 | 0.88 |
| Deeper convolution | 0.97 | 0.80 | 0.80 |
| Double convolution | 0.99 | 0.88 | 0.86 |
| Overlapping stride | 0.98 | 0.81 | 0.81 |

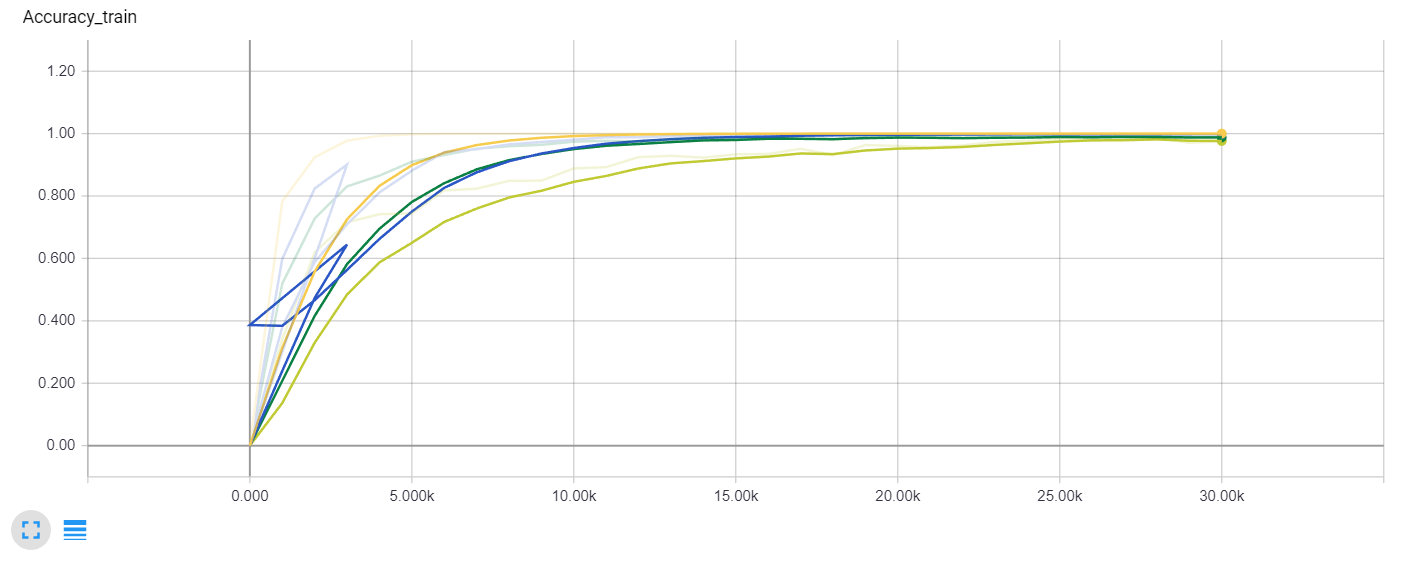

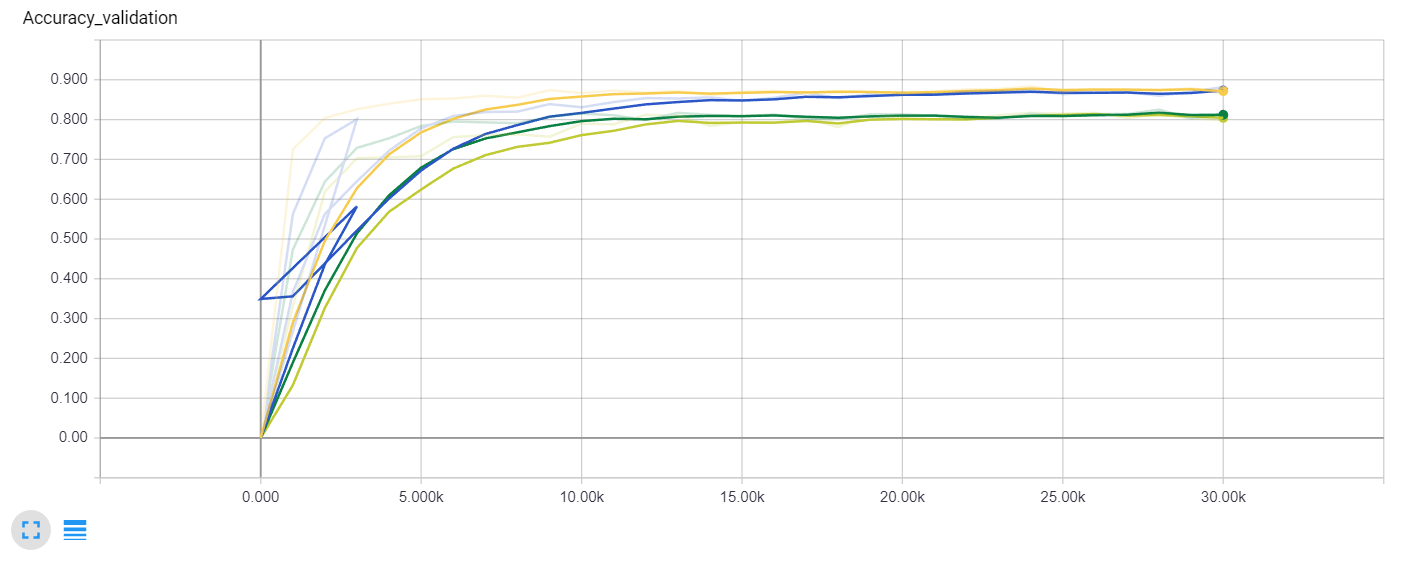

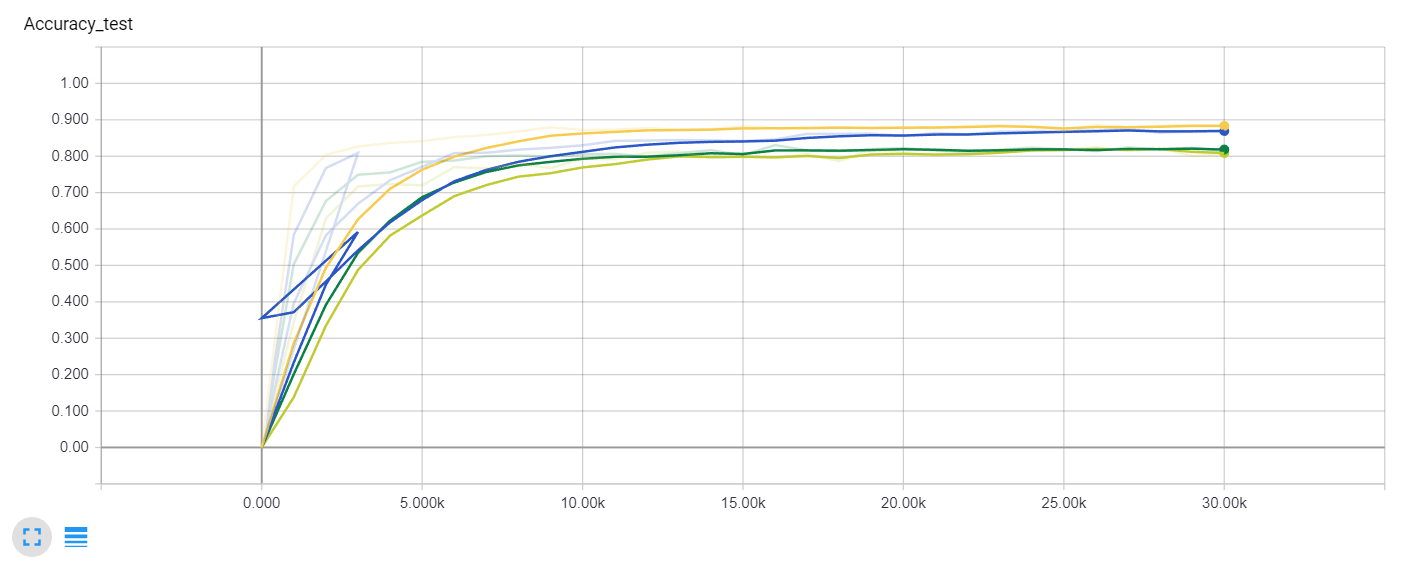

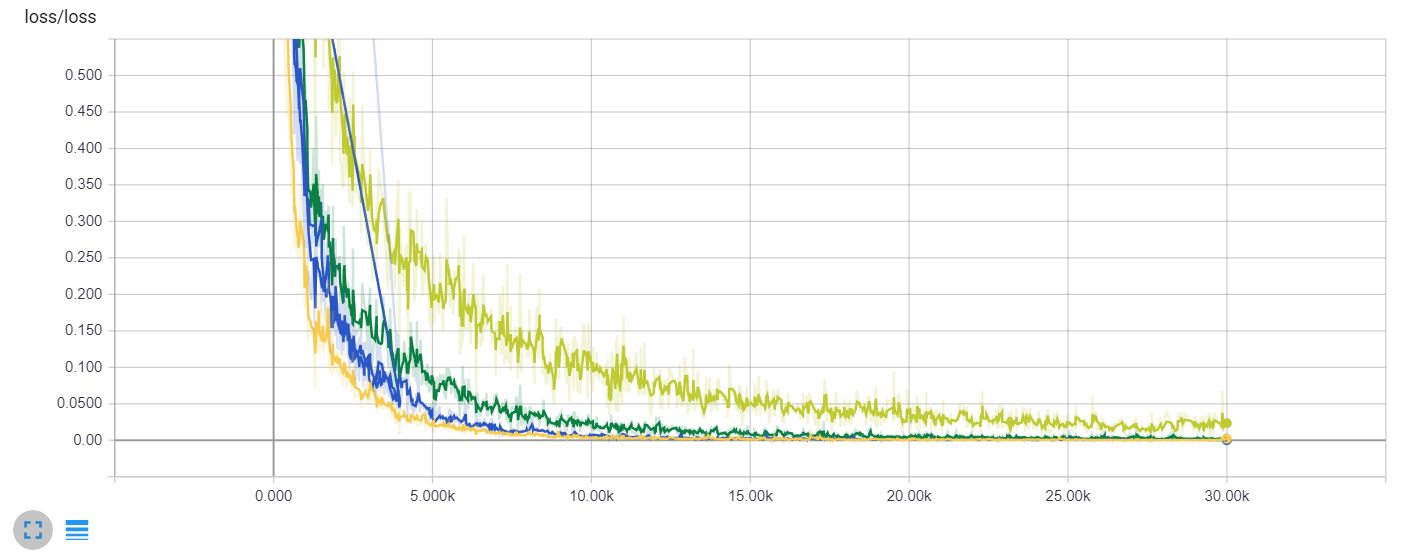

The curl in the blue lines is caused by running the training again after a crash without deleting the old TensorBoard file.

Train accuracy comparison (Yellow – batch normalization, Blue – double architecture; Light green – deeper architecture; Dark green – overlapping stride)

Validation accuracy comparison (Yellow – batch normalization, Blue – double architecture; Light green – deeper architecture; Dark green – overlapping stride)

Test accuracy comparison (Yellow – batch normalization, Blue – double architecture; Light green – deeper architecture; Dark green – overlapping stride)

Loss comparison (Yellow – batch normalization, Blue – double architecture; Light green – deeper architecture; Dark green – overlapping stride)

Conclusion

A deeper network doesn’t automatically mean better accuracy, because overfitting starts to be a big issue. Overlapping stride doesn’t help in this task. Batch normalization helps to achieve a better result.

I would like to thank my colleague and friend Roman for giving me useful tips.

The next experiments are described in part two.

Hi Petr, your work is absolutely excellent and amazing. I am a student who is currently trying to do the same work as you. I wonder if there are any helpful resources you feel are definitely worth mentioning for this project? I would love to know what helped you finish this amazing project!

Hello. Thank you! I mainly used two resources: Convolutional Neural Networks for Visual Recognition (http://cs231n.github.io/) and Udacity course called Deep learning (https://eu.udacity.com/course/deep-learning–ud730). If you want to go even deeper, you can read papers mentioned in https://adeshpande3.github.io/The-9-Deep-Learning-Papers-You-Need-To-Know-About.html

Thank you for the response! I’ll definitely dive into that. Wish you all the luck!

I also wonder how you built the graphs for test losses and accuracy? I guess the graphs of your neural net’s structure are generated by TensorBoard. Are the other graphs also generated by TensorBoard, or just pyplot? Thanks in advance.

I plotted the graphs with TensorBoard. Then I used the good old print screen button.

Hello Petr,

I am currently working on an Android application that recognizes numbers from images. Your work seems to fit well, however I cannot find any example of how to evaluate the trained network with example images, nor how to integrate it with an Android app (like TensorFlow for Poets, etc.).

Is it possible to get some support here?

Regards,

Jakub

Hi Jakub,

I don’t have any experience with Tensorflow on Android. This is something I can’t help with right now.